Mitigating Semantic Collapsing Problem in Generative Personalization with Test-time Embedding Adjustment

Table of Contents

- Abstract

- Motivation

- Contributions

- Semantic Collapsing Problem (Witch Hunting)

- Test-Time Embedding Adjustment

- Surprising Impact of TEA on Anti-DreamBooth

- Experiments

- Citation

- References

Abstract

In this paper, we investigate the semantic collapsing problem in generative personalization, an under-explored topic where the learned visual concept (\(V^*\)) gradually shifts from its original textual meaning and comes to dominate other concepts in multi-concept input prompts. This issue not only reduces the semantic richness of complex input prompts like “a photo of \(V^*\) wearing glasses and playing guitar” into simpler, less contextually rich forms such as “a photo of \(V^*\)” but also leads to simplified output images that fail to capture the intended concept. We identify the root cause as unconstrained optimisation, which allows the learned embedding \(V^*\) to drift arbitrarily in the embedding space, both in direction and magnitude. To address this, we propose a simple yet effective training-free method that adjusts the magnitude and direction of pre-trained embedding at inference time, effectively mitigating the semantic collapsing problem. Our method is broadly applicable across different personalization methods and demonstrates significant improvements in text-image alignment in diverse use cases. Our code is published at https://github.com/tuananhbui89/Embedding-Adjustment.

Motivation

Misalignment between the input prompt and the generated output is a grand challenge in generative personalization. While the goal is to faithfully preserve the personalized concept and simultaneously respect the semantic content of the prompt, existing methods often fail to achieve this balance. For instance, DreamBooth [1] introduces a class-specific prior preservation loss to mitigate overfitting, while other approaches seek to disentangle the personalized concept from co-occurring or background elements in the reference set [6, 7]. Recent works further attempt to regulate semantic fidelity through regularization strategies [4, 5] or compositional disentanglement [2, 3].

Contributions

In this paper, we make three contributions that I am personally really proud of:

- We first highlight the semantic collapsing problem (SCP) in generative personalization, which is under-explored in the literature. We show that this phenomenon is driven by unconstrained optimization during personalization fine-tuning. To the best of our knowledge, this is the first work that explicitly points out this problem.

- We propose a training-free method, Test-time Embedding Adjustment (TEA), that adjusts the embedding of the personalized concept at inference time, effectively mitigating the semantic collapsing problem. This is a simple, general, and yet very effective approach, and the first of its kind :D.

- We make a connection between SCP and Anti-DreamBooth, showing why Anti-DreamBooth works and how TEA can be applied to partially reverse the Anti-DreamBooth effect and restore the protected concept. Several works have shown the weak security of Anti-Personalization frameworks, but most of them focus on the preprocessing phase (i.e., removing the invisible mask from the data so that the data can still be personalized). Our work is the first to show the vulnerability of Anti-Personalization frameworks in the post-processing phase (i.e., given a thought-to-be-protected model, we can still recover the protected concept).

Semantic Collapsing Problem (Witch Hunting)

The flow of the paper is a bit unusual: I start by hypothesizing the problem, then propose a method to show its existence, and finally propose the solution (TEA). My colleagues argued for a different flow (problem, solution, explanation), but I decided to keep this one (problem, explanation, solution) because I believe that once everyone understands the problem, the solution is very straightforward.

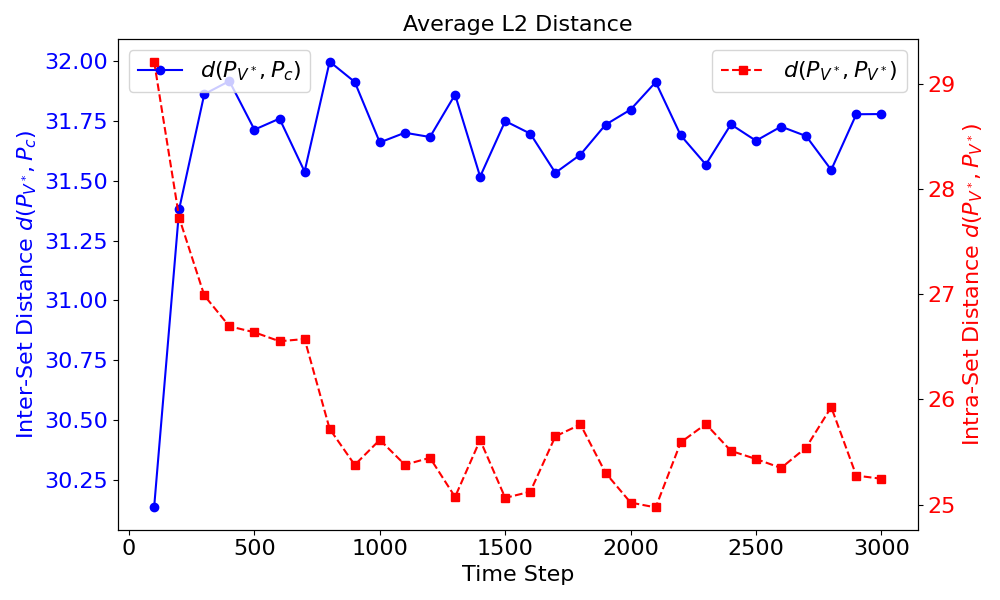

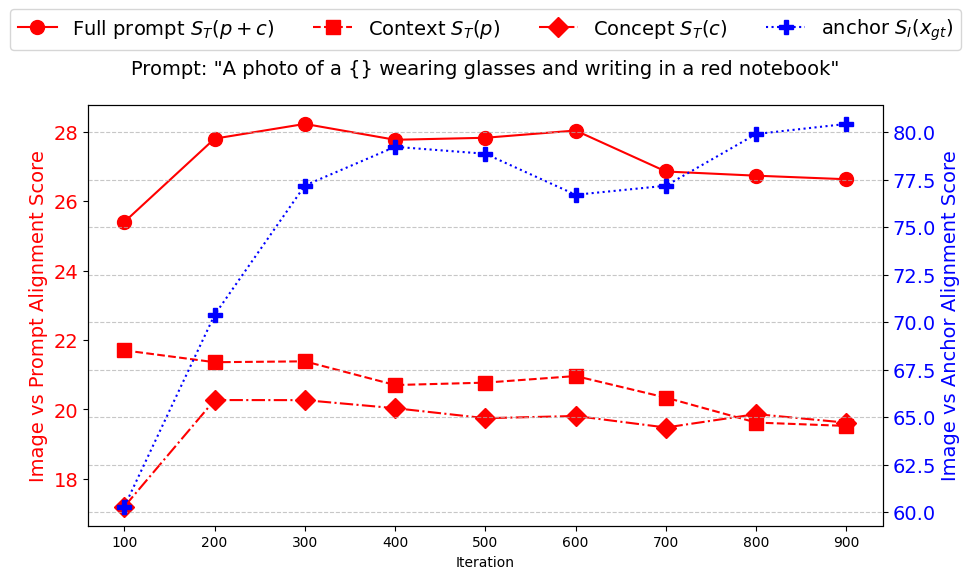

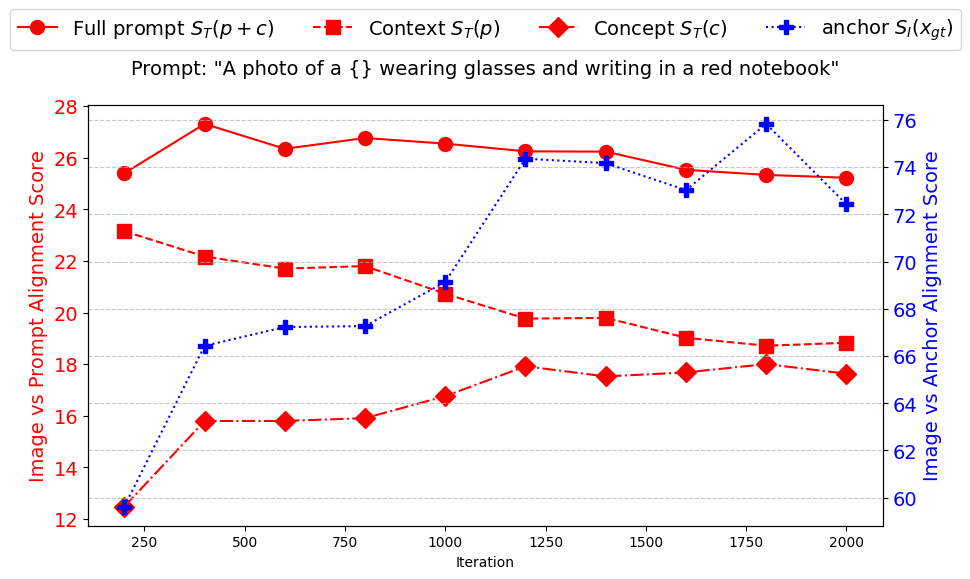

In this section, we present empirical evidence supporting the existence of the semantic collapsing problem and its impact on generation quality. Our key findings are as follows:

#1. Existence of SCP. SCP exists in the textual domain, where the prompt \(\lfloor p, V^* \rfloor\) is dominated by the learned embedding \(V^*\) and the semantic meaning of the entire prompt gradually collapses to the learned embedding \(V^*\), i.e., \(\tau(\lfloor p, V^* \rfloor) \rightarrow \tau(V^*)\).

#2. Negative Impact on Generation Quality. SCP leads to the degradation/misalignment in generation quality in the image space, i.e., \(G(\lfloor p, V^* \rfloor) \rightarrow G(V^*)\), particularly for prompts with complex semantic structures.

#3. Surprisingly Positive Impact. SCP can also have a positive impact on generation quality, particularly for prompts where the concept \(c\) requires a strong visual presence to be recognisable.

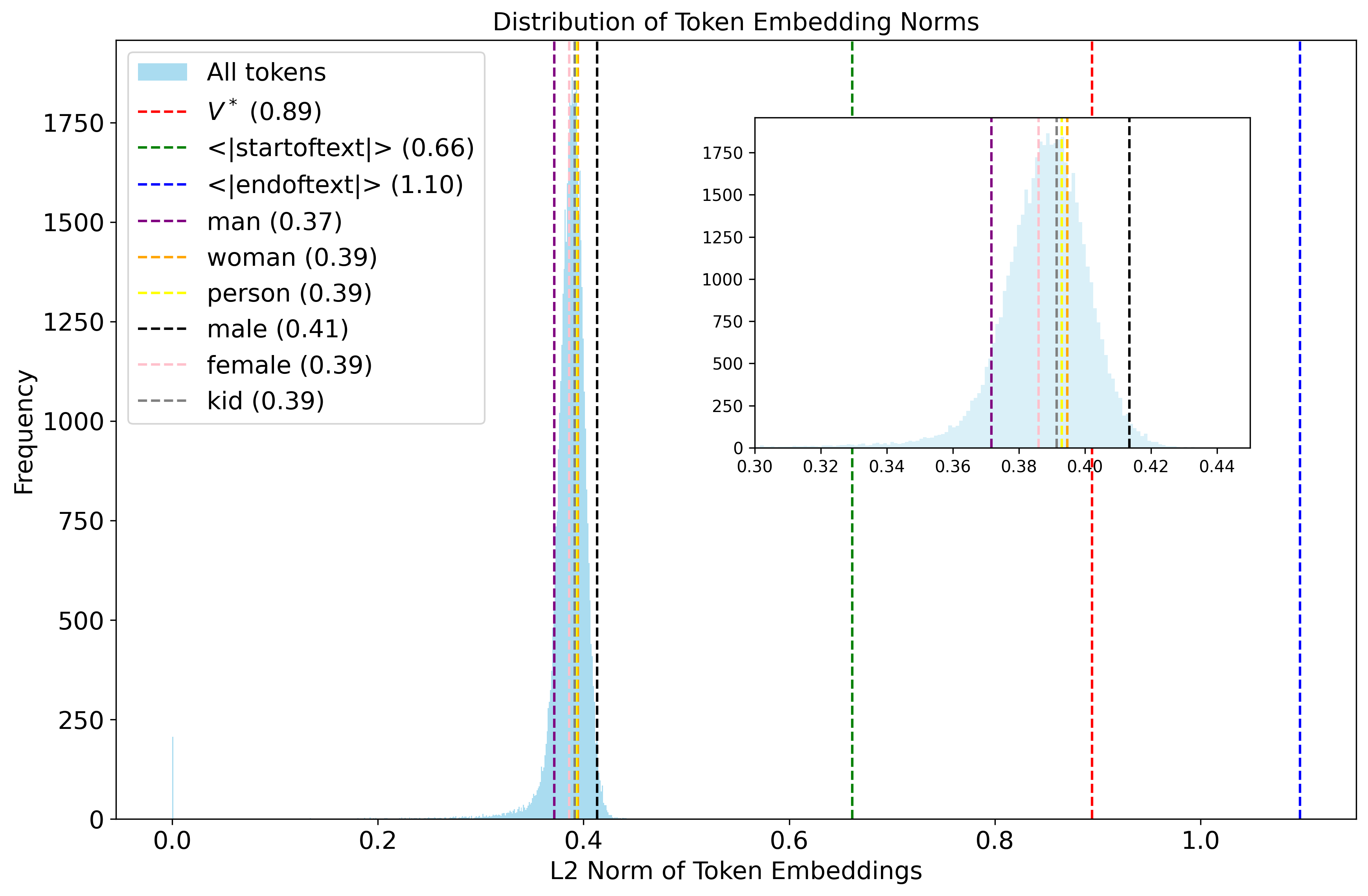

#4. Root Cause of SCP. SCP arises from unconstrained optimisation during personalization, which leads to arbitrary shifts (both in magnitude and direction) in the embedding of \(V^*\) away from its original semantic concept \(c\).

Test-Time Embedding Adjustment

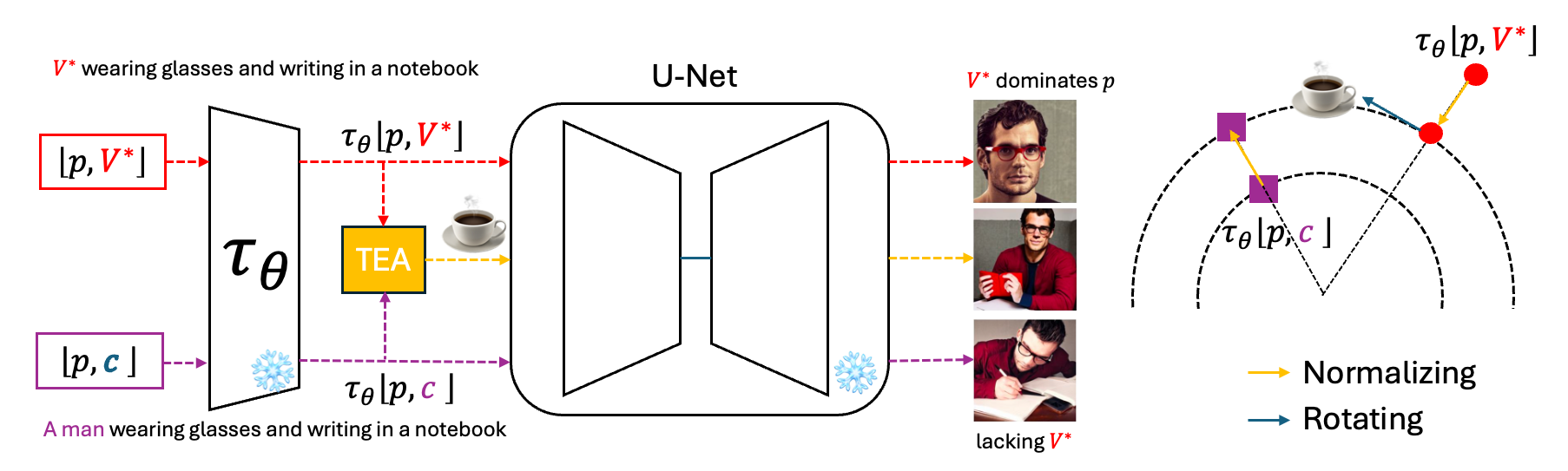

Given the root cause of SCP, a natural question arises: can we reverse this semantic shift at test time by adjusting \(V^*\), without modifying the personalization method? Surprisingly, the answer is yes, with a simple, general, and yet very effective approach.

Embedding Adjustment

Given a pre-trained embedding matrix \(M\) that includes a learned token \(V^*\) (as in Textual Inversion), and a target concept \(c\) toward which we wish to regularise, we propose to adjust \(M_{V^*}\) by aligning both its magnitude and direction with \(M_c\). This is achieved by first normalising the vectors and then applying Spherical Linear Interpolation (SLERP) to interpolate the direction of \(M_{V^*}\) towards \(M_c\), which is effective in high-dimensional vector spaces.

\[\hat{M}_{V^*} = \frac{\sin((1-\alpha)\theta)}{\sin(\theta)} \tilde{M}_{V^*} + \frac{\sin(\alpha\theta)}{\sin(\theta)} \tilde{M}_c\]

Here, \(\theta\) is the angle between the normalized vectors \(\tilde{M}_c\) and \(\tilde{M}_{V^*}\), and \(\alpha \in [0, 1]\) controls the rotation factor: the larger \(\alpha\) is, the more the embedding is rotated towards \(M_c\).

The normalized vectors are defined as:

- \[\tilde{M}_{V^*} = \beta \left\| M_c \right\| \frac{M_{V^*}}{\left\| M_{V^*} \right\|}\]

- \[\tilde{M}_c = \beta \left\| M_c \right\| \frac{M_c}{\left\| M_c \right\|}\]

where \(\beta\) is the scaling factor to control the magnitude of the embedding relative to the reference concept \(c\).
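To make the two formulas above concrete, here is a minimal NumPy sketch of the token-level adjustment. It is an illustration under assumptions, not the released implementation: the function name `adjust_embedding`, the default values of `alpha` and `beta`, the `eps` guard, and the linear-interpolation fallback for (anti-)parallel vectors are all hypothetical choices made for this example.

```python
import numpy as np


def adjust_embedding(m_v, m_c, alpha=0.5, beta=1.0, eps=1e-8):
    """Rescale both vectors to norm beta * ||m_c||, then SLERP from m_v toward m_c."""
    target_norm = beta * np.linalg.norm(m_c)
    v_tilde = target_norm * m_v / (np.linalg.norm(m_v) + eps)
    c_tilde = target_norm * m_c / (np.linalg.norm(m_c) + eps)

    # Angle between the two rescaled vectors (clipped for numerical safety).
    cos_theta = np.dot(v_tilde, c_tilde) / (
        np.linalg.norm(v_tilde) * np.linalg.norm(c_tilde) + eps
    )
    theta = np.arccos(np.clip(cos_theta, -1.0, 1.0))

    # Assumption: when the vectors are nearly parallel or anti-parallel,
    # sin(theta) ~ 0, so fall back to plain linear interpolation.
    if np.sin(theta) < 1e-6:
        return (1.0 - alpha) * v_tilde + alpha * c_tilde

    w_v = np.sin((1.0 - alpha) * theta) / np.sin(theta)
    w_c = np.sin(alpha * theta) / np.sin(theta)
    return w_v * v_tilde + w_c * c_tilde
```

With `alpha=0` the result is the rescaled \(\tilde{M}_{V^*}\), with `alpha=1` it is \(\tilde{M}_c\), and intermediate values keep the norm at \(\beta \| M_c \|\) while rotating the direction.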

In DreamBooth-based personalization, the embedding matrix \(M\) is not updated during optimization, so we propose to adjust at the prompt level instead of the token level, as illustrated in the figure above. More specifically, given a prompt \(\lfloor p, V^* \rfloor\) and a target prompt \(\lfloor p, c \rfloor\), we obtain the two embeddings \(\tau(\lfloor p, V^* \rfloor)\) and \(\tau(\lfloor p, c \rfloor)\) from the text encoder \(\tau_{\phi}\) and then adjust the embedding of \(\lfloor p, V^* \rfloor\) by applying the above equation to every token in the prompt.

\[\hat{\tau}(\lfloor p, V^* \rfloor)[i] = \frac{\sin((1-\alpha)\theta_i)}{\sin(\theta_i)} \tilde{\tau}(\lfloor p, V^* \rfloor)[i] + \frac{\sin(\alpha\theta_i)}{\sin(\theta_i)} \tilde{\tau}(\lfloor p, c \rfloor)[i]\]

where \(i\) indexes each token in the prompt, and \(\theta_i\) is the angle between the \(i\)-th token embeddings of the two prompts after normalisation.
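The prompt-level variant can be sketched as a vectorized operation over two `(num_tokens, dim)` arrays. This is only a sketch under assumptions: the function name `adjust_prompt_embedding`, rescaling each token to \(\beta\) times the norm of the corresponding token in \(\tau(\lfloor p, c \rfloor)\), and the linear fallback for tokens whose two embeddings nearly coincide are choices made for this example rather than details confirmed by the paper.

```python
import numpy as np


def adjust_prompt_embedding(e_v, e_c, alpha=0.5, beta=1.0, eps=1e-8):
    """Token-wise SLERP between two (num_tokens, dim) prompt embeddings."""
    norm_v = np.linalg.norm(e_v, axis=-1, keepdims=True)
    norm_c = np.linalg.norm(e_c, axis=-1, keepdims=True)
    unit_v = e_v / (norm_v + eps)
    unit_c = e_c / (norm_c + eps)

    # Per-token angle theta_i between the two prompts.
    cos_theta = np.clip(np.sum(unit_v * unit_c, axis=-1, keepdims=True), -1.0, 1.0)
    theta = np.arccos(cos_theta)
    sin_theta = np.sin(theta)

    # Assumption: tokens with sin(theta_i) ~ 0 use linear weights instead,
    # which avoids dividing by zero.
    safe = sin_theta > 1e-6
    denom = np.where(safe, sin_theta, 1.0)
    w_v = np.where(safe, np.sin((1.0 - alpha) * theta) / denom, 1.0 - alpha)
    w_c = np.where(safe, np.sin(alpha * theta) / denom, alpha)

    # Assumption: each token is rescaled to beta * ||e_c[i]||.
    return beta * norm_c * (w_v * unit_v + w_c * unit_c)
```

In practice `e_v` and `e_c` would be the text-encoder outputs for the two prompts, converted to NumPy arrays; the adjusted array would then replace the original conditioning passed to the generator.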

This method enables a test-time adjustment of semantic drift without retraining, making it a lightweight and broadly applicable solution to mitigating SCP effects.

Surprising Impact of TEA on Anti-DreamBooth

Due to the space constraints in the paper, I could not include the complete picture of the following findings that I think are very interesting and worth sharing. So with the flexibility of this blog post, I will share the complete picture of the connection between SCP and Anti-DreamBooth and how TEA can be applied to partially reverse the Anti-DreamBooth effect and restore the protected concept.

Negative Impact of Generative Personalization

Generative personalization has a lot of potential: a person can obtain a personalized model for their own use and, with their imagination, generate whatever they want, just like in a “Dream”. However, there is also a downside. Malicious users (think of your ex or an unknown scammer) can collect your personal images quite easily from social media platforms such as Facebook or Instagram. Then, just like you, they can use the same personalization method to build a personalized model from your images.

Unlike your “Good Dream”, they can bring you a “nightmare” by generating images that are not only unwanted but also harmful to you or your loved ones. Below are images from attacking myself with DreamBooth on the SDXL-1.0 model, adding some tattoos to my face. I think you can see what could possibly go wrong with this.

Anti-DreamBooth - When Adversarial Learning for Good

The motivation of Anti-DreamBooth and other Anti-Personalization frameworks is to prevent malicious users from misusing generated images. This is done by adding an invisible mask to the user’s images before publishing them on the internet, with the expectation that attackers cannot use the masked images to train a personalized model that generates harmful images (e.g., nude images) of the person.

The principle of learning the invisible mask is a min-max game between the defender and the attacker, which is formulated as:

\[\begin{align} \begin{split} \delta^{*(i)} = & \text{argmax}_{\delta^{(i)}} \mathcal{L}_{cond}(\theta^*, x^{(i)} + \delta^{(i)}), \forall i \in \{1,..,N_{db}\},\\ \text{s.t.} \quad & \theta^* = \text{argmin}_{\theta} \sum_{i=1}^{N_{db}} \mathcal{L}_{db}(\theta, x^{(i)} + \delta^{(i)}),\\ \text{and} \quad & \Vert \delta^{(i)} \Vert_p \leq \eta \quad \forall i \in \{1,..,N_{db}\}, \end{split} \end{align}\]

where \(\theta^*\) denotes the model parameters, \(\mathcal{L}_{cond}\) is the conditional loss function, \(\mathcal{L}_{db}\) is the DreamBooth loss function, and \(\eta\) is the constraint on the magnitude of the perturbation.

The conditional loss can be defined via reconstruction and prior losses:

\[\begin{equation} \mathbb{E}_{\mathbf{x}, \mathbf{c}, \mathbf{\epsilon}, \mathbf{\epsilon}^{'},t} \left[ \underbrace{w_t \| \hat{\mathbf{x}}_\theta (\alpha_t \mathbf{x} + \sigma_t \mathbf{\epsilon}, \mathbf{c}) - \mathbf{x}\|_2^2}_{\mathcal{L}_{recon}} + \lambda \underbrace{ w_{t^{'}} \| \hat{\mathbf{x}}_\theta(\alpha_{t^{'}} \mathbf{x}_\text{pr} + \sigma_{t^{'}} \mathbf{\epsilon}^{'}, \mathbf{c}_\text{pr}) - \mathbf{x}_\text{pr} \|_2^2}_{\mathcal{L}_{prior}} \right] \end{equation}\]

This is a defender vs. attacker game played in two nested steps:

The inner problem (argmin) simulates the attacker: given your perturbed photos, the attacker trains a DreamBooth model as best they can — minimizing the training loss \(\mathcal{L}_{db}\) to learn your face as accurately as possible.

The outer problem (argmax) is the defender’s move: design the perturbation \(\delta^*\) so that even the attacker’s best-trained model \(\theta^*\) suffers a high conditional loss \(\mathcal{L}_{cond}\) — meaning the model fails to generate realistic images of you.

The constraint (\(\|\delta^{(i)}\|_p \leq \eta\)) keeps the perturbation imperceptibly small, so your photos still look completely normal to a human viewer.

The conditional loss \(\mathcal{L}_{cond}\) itself has two components that the defender wants to maximize:

- \(\mathcal{L}_{recon}\): measures how well the attacker’s model can reconstruct your face — a high value means it fails.

- \(\mathcal{L}_{prior}\): measures how well the model preserves general image generation ability — included to keep the training realistic and stable.

Together, maximizing both terms ensures the attacker’s fine-tuned model cannot reproduce your likeness, even after doing its best to learn from your photos.
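To give a feel for the defender's constrained maximization, the toy sketch below runs projected gradient ascent under an \(L_\infty\) bound. This is one common way to approximate a norm-constrained argmax, shown here with the attacker's model held fixed. It is not the Anti-DreamBooth implementation: `grad_fn` is a hypothetical stand-in for the gradient of \(\mathcal{L}_{cond}\) with respect to the image, and the step size, number of steps, and choice of \(p = \infty\) are assumptions made for illustration.

```python
import numpy as np


def projected_gradient_ascent(x, grad_fn, eta=0.05, step=0.01, n_steps=10):
    """Maximize a loss over delta subject to ||delta||_inf <= eta."""
    delta = np.zeros_like(x)
    for _ in range(n_steps):
        g = grad_fn(x + delta)              # gradient of the loss w.r.t. the input
        delta = delta + step * np.sign(g)   # signed ascent step
        delta = np.clip(delta, -eta, eta)   # project back onto the L_inf ball
    return delta


# Toy usage: the "loss" is the squared distance to a target, so ascent pushes x away from it.
target = np.array([1.0, 0.0])
x = np.array([0.0, 1.0])
delta = projected_gradient_ascent(x, lambda z: 2.0 * (z - target))
```

In the full min-max game, the model parameters would also be retrained on the perturbed images between perturbation updates; the sketch covers only the outer step.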

This is the textbook view. But does the adversarial mask actually work by preventing the model from learning the concept — or is there something more nuanced happening underneath?

The underlying mechanism of Anti-DreamBooth

While Anti-DreamBooth is empirically effective (as shown in the literature), its underlying mechanism is not as well understood as it may seem. Naively, one might assume the adversarial mask works by maximizing the DreamBooth training loss, thereby preventing the model from learning the protected concept \(V^*\). But is that actually what happens?

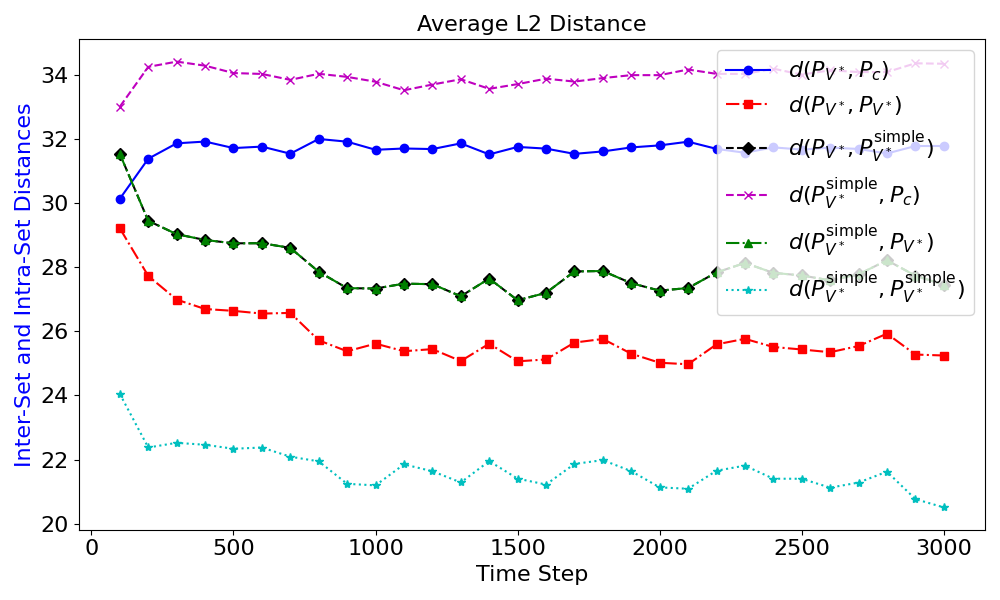

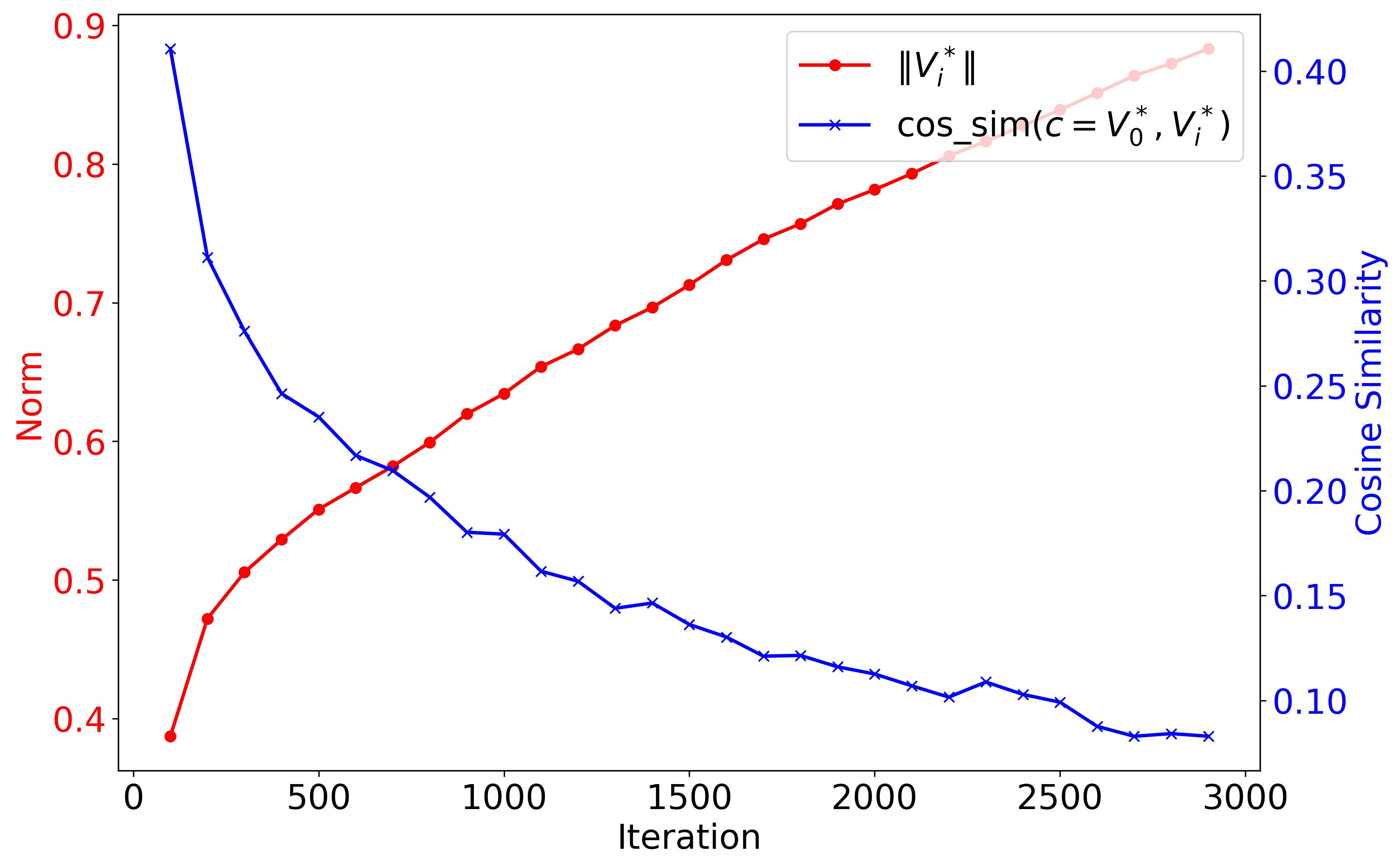

We hypothesize that the adversarial learning process of Anti-DreamBooth actually amplifies the dominance of the personalized concept \(V^*\), but with good implications for user privacy, by causing the prompt embedding \(\lfloor p, V^* \rfloor\) to drift even further from its original concept \(\lfloor p, c \rfloor\), resulting in distorted generations of the protected concept \(V^*\).

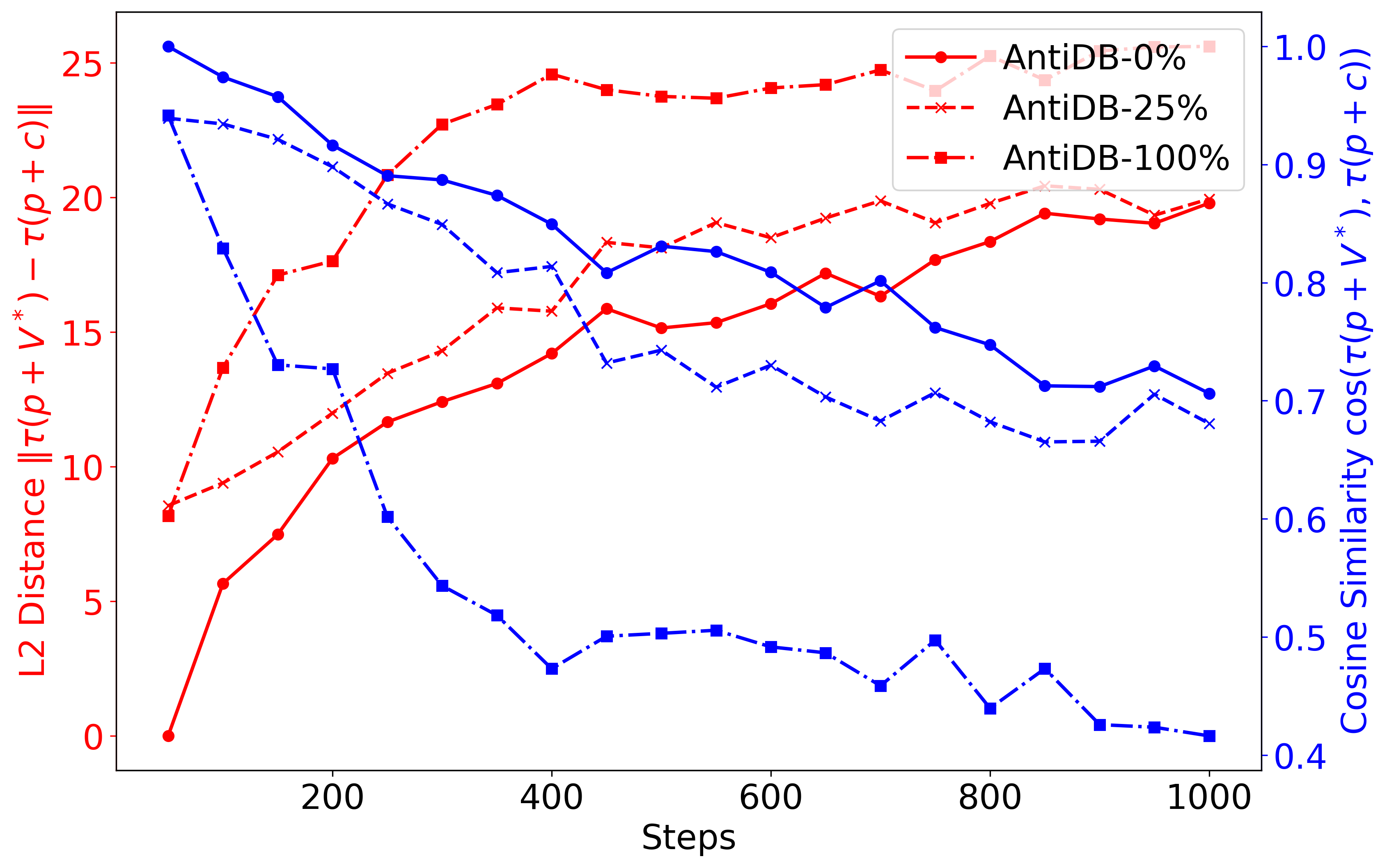

To verify this hypothesis, we conduct a controlled experiment. Given a set of benign personal images \(\{ x_i \}_{i=1}^n\), we apply Anti-DreamBooth to produce masked images \(\{ \hat{x}_i \}_{i=1}^n\). We then train DreamBooth models using mixtures of masked and benign images, with varying proportions \(p \in \{0, 0.2, 1.0\}\) of masked images, where \(p=0\) corresponds to standard DreamBooth and \(p=1.0\) uses only masked data. We then analyze the resulting prompt embeddings using the same prompt-embedding analysis described earlier. As shown in the figure below, increasing \(p\) leads to greater embedding drift, evident in the larger norm \(\| \tau(\lfloor p, V^* \rfloor) - \tau(\lfloor p, c \rfloor) \|\) and the lower cosine similarity between \(\tau(\lfloor p, V^* \rfloor)\) and \(\tau(\lfloor p, c \rfloor)\). This result confirms our hypothesis and provides an interesting perspective on why anti-personalization works.
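The two drift measures used in this analysis are straightforward to compute. The sketch below assumes the prompt embeddings are available as arrays and flattens them before comparison; both the flattening and the function name `embedding_drift` are assumptions for illustration, and the paper may aggregate per token instead.

```python
import numpy as np


def embedding_drift(e_v, e_c, eps=1e-8):
    """Return (L2 distance, cosine similarity) between two flattened embeddings."""
    a = np.asarray(e_v, dtype=float).ravel()
    b = np.asarray(e_c, dtype=float).ravel()
    distance = np.linalg.norm(a - b)
    cosine = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b) + eps)
    return distance, cosine
```

A larger distance together with a lower cosine similarity indicates that \(\tau(\lfloor p, V^* \rfloor)\) has moved further from \(\tau(\lfloor p, c \rfloor)\).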

TEA on Anti-DreamBooth

This finding naturally raises a question: if Anti-DreamBooth’s protection is rooted in amplified semantic drift — the same phenomenon TEA is designed to address — can TEA partially undo it?

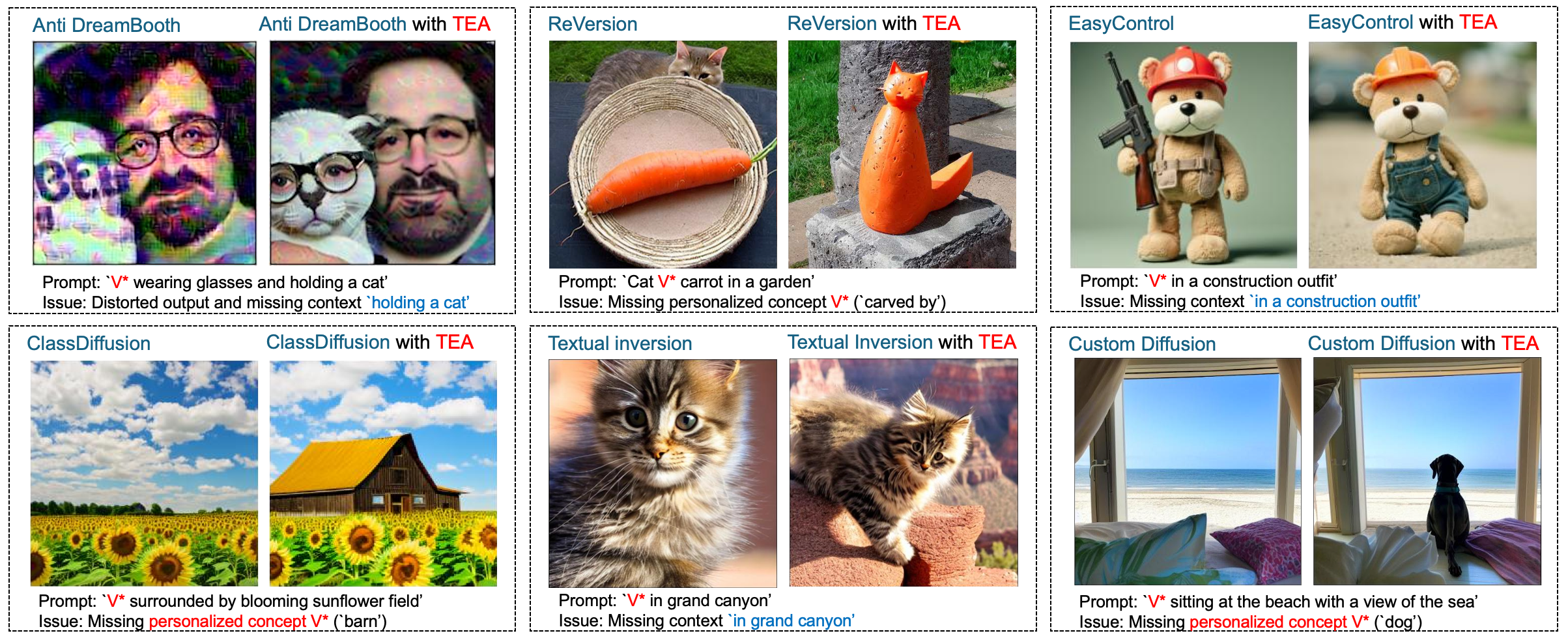

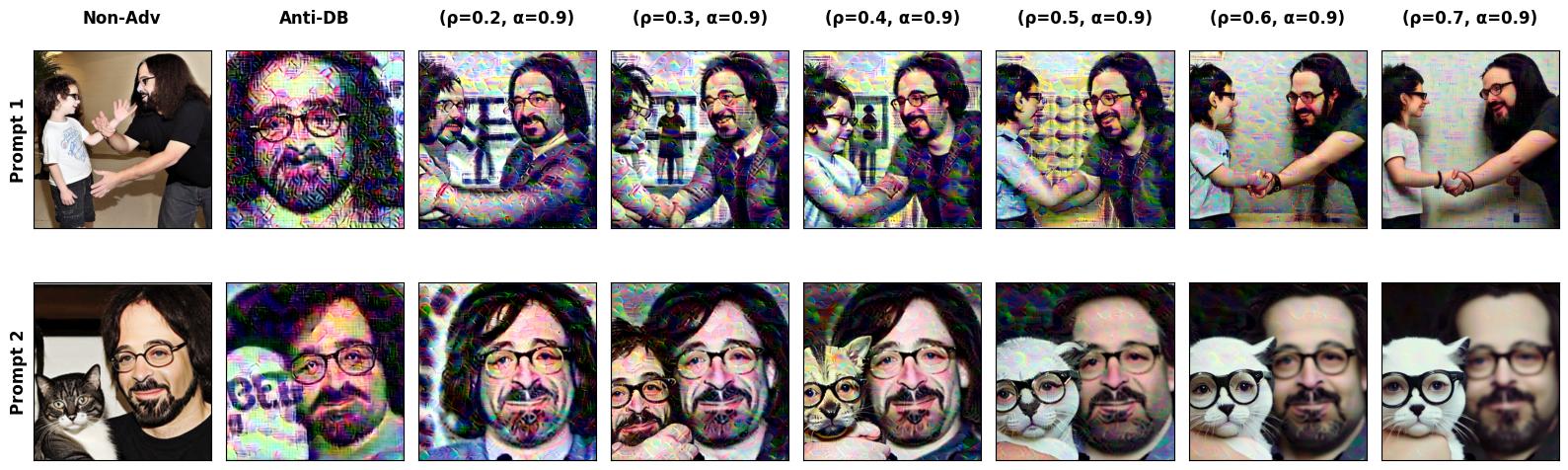

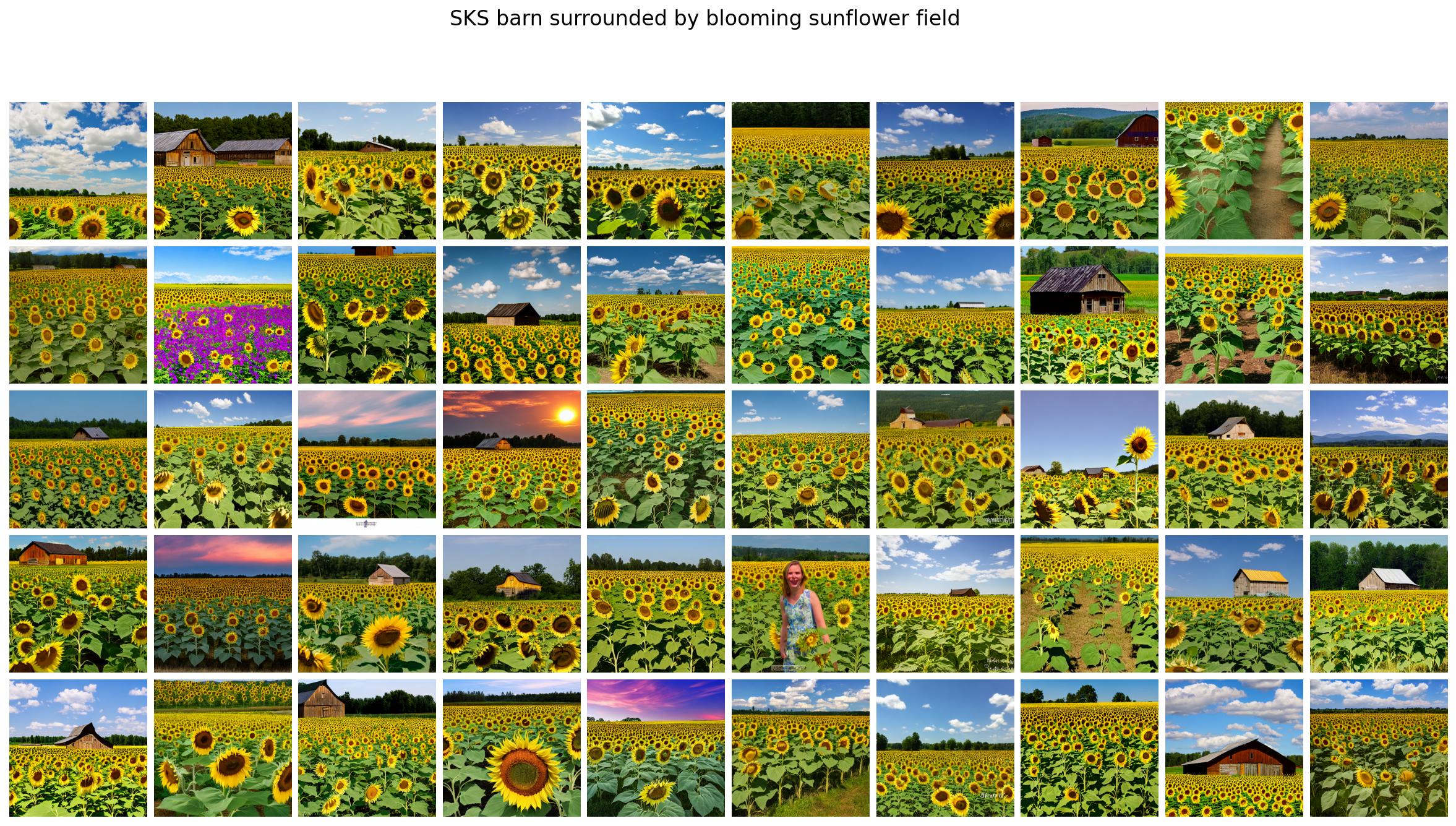

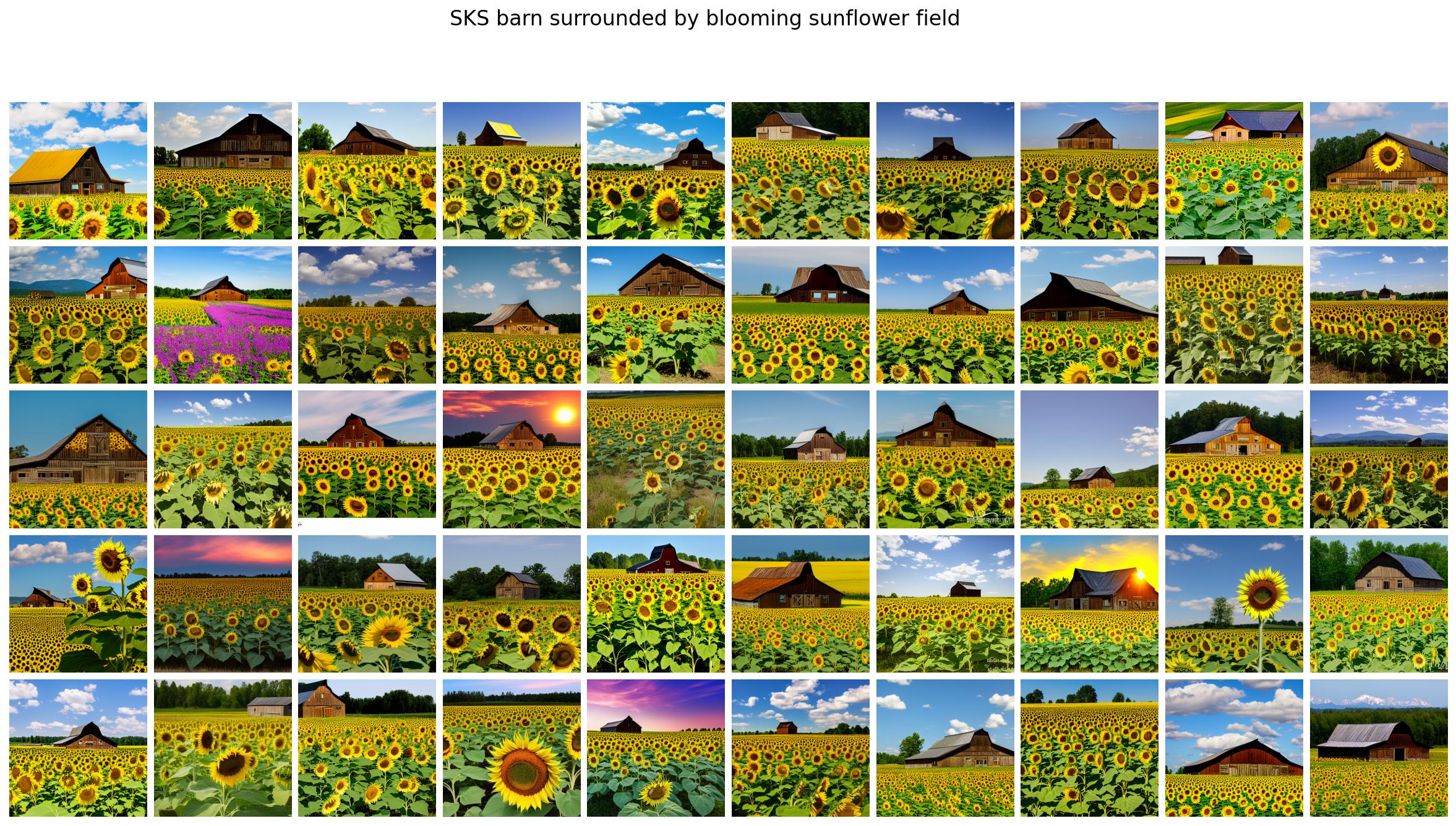

Surprisingly, when we apply TEA to DreamBooth models poisoned by Anti-DreamBooth, we observe exactly that: a mitigation effect where the images generated with TEA are less distorted and more aligned with the to-be-protected concept \(V^*\), as shown in the figure below.

This finding reveals an intriguing false sense of security in Anti-DreamBooth: despite adversarial masking, the poisoned personalized model still retains traces of the correct/to-be-protected concept \(V^*\), which can be partially recovered by TEA. To the best of our knowledge, this is the first work to uncover such a counter-intuitive vulnerability of Anti-DreamBooth.

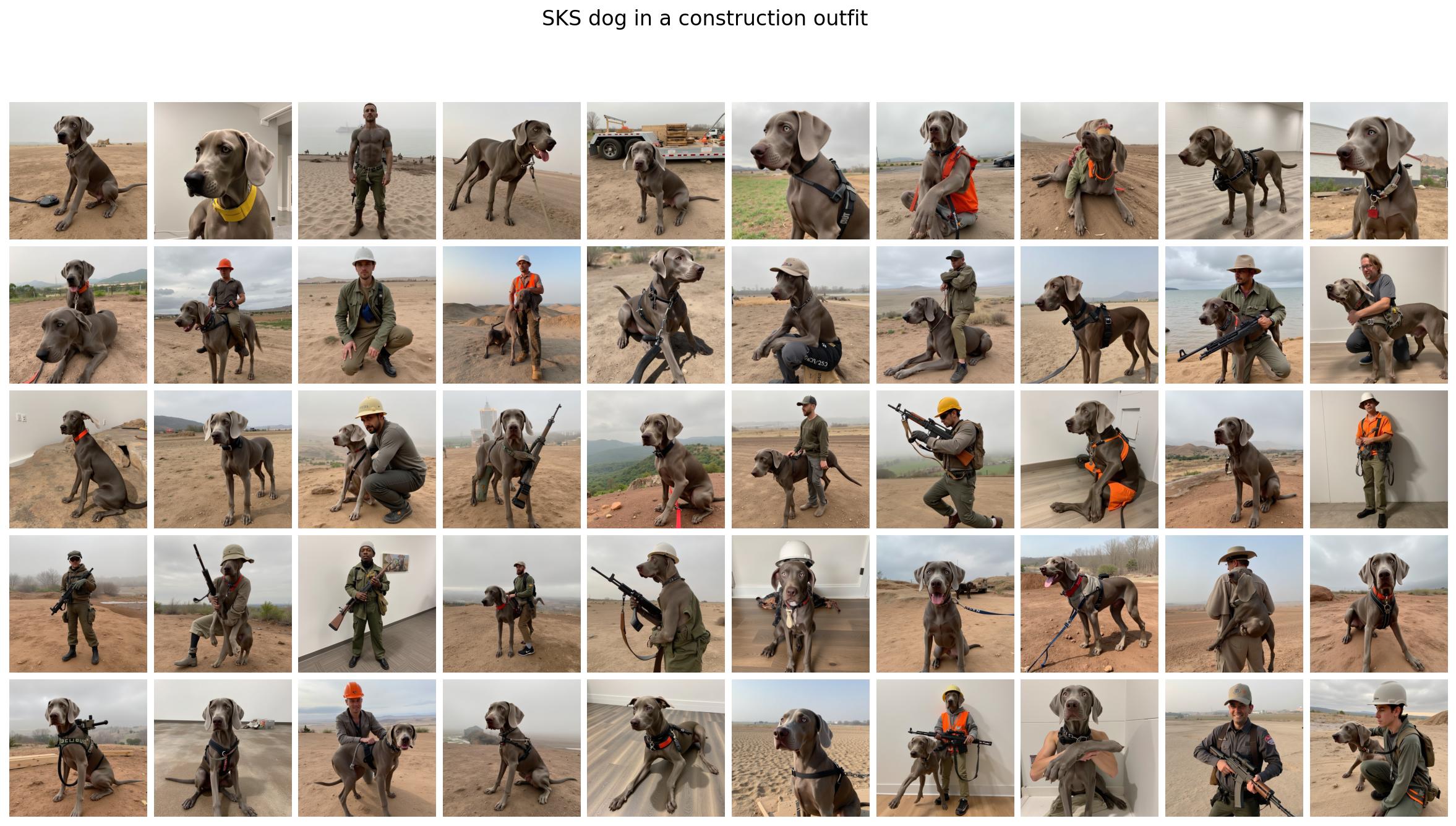

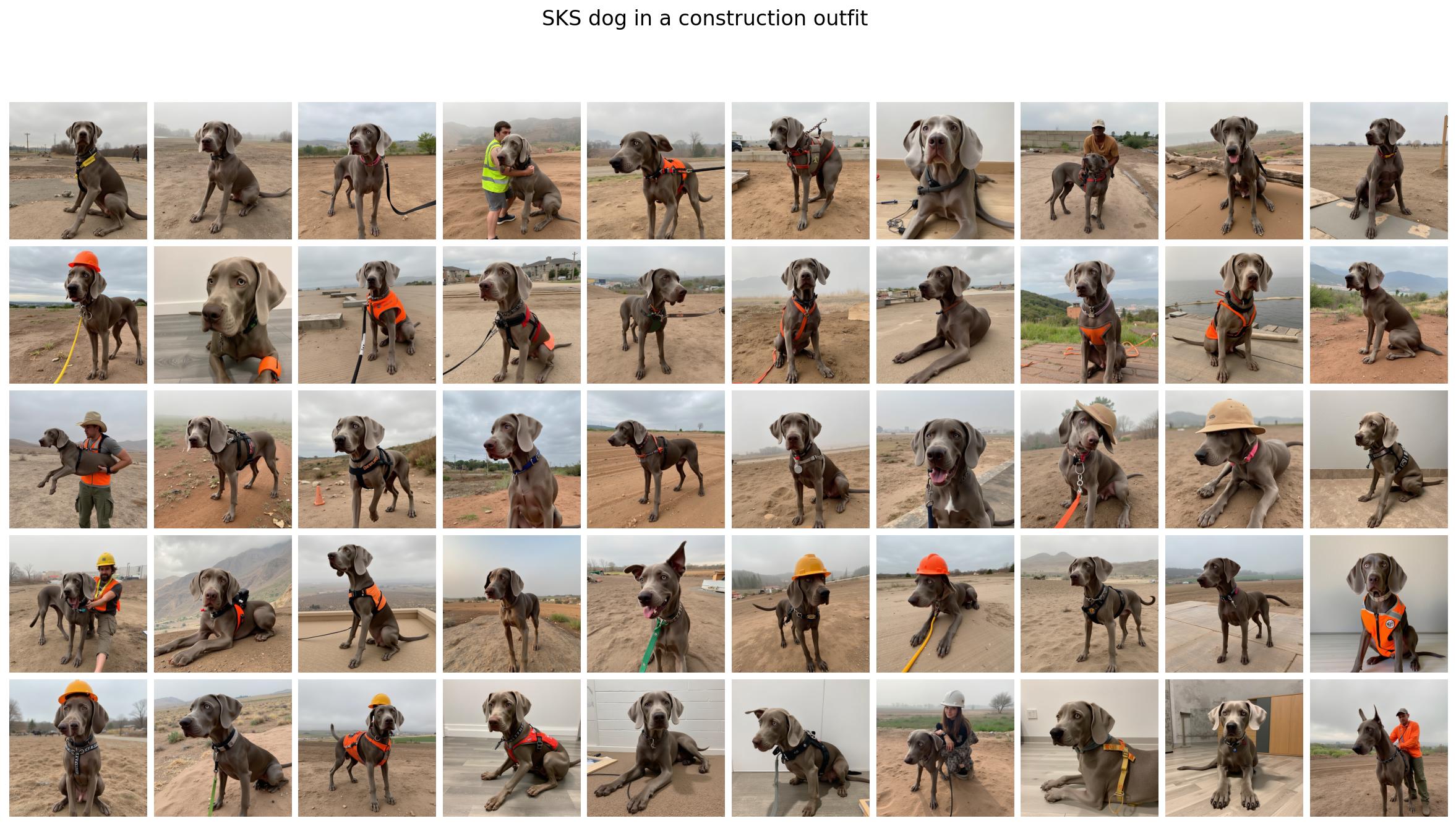

Experiments

We demonstrate the effectiveness of TEA in addressing SCP across six representative and recent personalization methods, two architectures (Stable Diffusion and Flux), and three datasets (CS101, CelebA, and Relationship) comprising 22 concepts in total. Please refer to the paper for more details. I am really proud of the results and of the simplicity, generalizability, and effectiveness of TEA :D.

Some qualitative results are shown below when our TEA is applied to SOTA personalization methods.

Citation

If you find this work useful in your research, please consider citing our paper

@article{bui2025mitigating,

title={Mitigating Semantic Collapse in Generative Personalization with Test-Time Embedding Adjustment},

author={Bui, Anh and Vu, Trang and Le, Trung and Kim, Junae and Abraham, Tamas and Omari, Rollin and Kaur, Amar and Phung, Dinh},

journal={The Fourteenth International Conference on Learning Representations (ICLR) 2026},

year={2025}

}

References

[1] Ruiz, Nataniel, et al. “Dreambooth: Fine tuning text-to-image diffusion models for subject-driven generation.” Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 2023.

[2] Motamed, Saman, Danda Pani Paudel, and Luc Van Gool. “Lego: Learning to Disentangle and Invert Personalized Concepts Beyond Object Appearance in Text-to-Image Diffusion Models.” arXiv preprint arXiv:2311.13833 (2023).

[3] Huang, Jiannan, et al. “Classdiffusion: More aligned personalization tuning with explicit class guidance.” arXiv preprint arXiv:2405.17532 (2024).

[4] Han, Ligong, et al. “Svdiff: Compact parameter space for diffusion fine-tuning.” Proceedings of the IEEE/CVF International Conference on Computer Vision. 2023.

[5] Qiu, Zeju, et al. “Controlling text-to-image diffusion by orthogonal finetuning.” Advances in Neural Information Processing Systems 36 (2023): 79320-79362.

[6] Avrahami, Omri, et al. “Break-a-scene: Extracting multiple concepts from a single image.” SIGGRAPH Asia 2023 Conference Papers. 2023.

[7] Jin, Chen, et al. “An image is worth multiple words: Discovering object level concepts using multi-concept prompt learning.” Forty-first International Conference on Machine Learning. 2024.