A Learning Log

Too much personal stuff, don't read it!

Learning targets

This is a learning log to keep track of what I have learned each day. To avoid distraction and fluctuation, I will set some long-term learning targets that need disciplined and consistent effort to achieve.

- #Research (including #AML, #GenAI): learn more about research, reading papers, especially in the field of adversarial machine learning and generative AI.

- #Coding: learn more about coding, especially data structures and algorithms.

- #F4T: food for thought, learn more about philosophy, history, and humanity.

- #Finance: learn more about finance, investment, and business.

- #Productivity: learn how to be more productive and effective in work and life. (effective != efficient, outcome != output, proactive > active > reactive, 1.01^365 = 37.8, 0.99^365 = 0.03)

2023-10-03

(#Coding) How to change Github Page theme to a new one?

I was quite happy with the AcademicPages theme, which is more than enough to show publications or simple blog posts. However, when I wanted to blog more seriously, the AcademicPages theme showed some limitations (or maybe I didn’t dig deep enough). For example, it does not support image captions, the table of contents is not generated automatically, code blocks are not highlighted properly, etc. Therefore, I tried to change to a new theme, and I fell in love with the al-folio theme, which has all the features that I need. The only problem was that I didn’t know how to change the theme without losing all the previous posts. The installation instructions are quite simple, but they only cover a new blog created from scratch. After some googling and trial and error, I found a solution that works for me. Here are the steps:

- clone the new theme to a new folder, for example, `al-folio`

- manually convert all the posts from the old theme to the new theme. Yeah, it’s painful, but I don’t know any other way to do it. The `post` layout in `al-folio` is a bit different from that in `AcademicPages`

- run `bundle exec jekyll serve` to check if everything is ok

- run `bundle exec jekyll build --lsi` to build the site. All the contents will be generated in the `_site` folder.

- remove all the contents belonging to the old theme in the `master` branch and copy all the contents in the `_site` folder to the `master` branch.

- push the `master` branch to Github and wait a few minutes for the Github Page to be updated.
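The copy step above (clear the old theme's files from the `master` checkout, then copy in the contents of `_site`) can be scripted. This is only a minimal sketch under assumptions: the function name `publish_site` and the example paths are hypothetical, and it keeps the `.git` folder so the branch history is untouched.

```python
import shutil
from pathlib import Path


def publish_site(site_dir, branch_dir, keep=(".git",)):
    """Replace the contents of branch_dir with the contents of site_dir.

    Entries whose names are listed in `keep` are never removed or overwritten.
    """
    site_dir, branch_dir = Path(site_dir), Path(branch_dir)

    # Remove everything that belongs to the old theme.
    for entry in branch_dir.iterdir():
        if entry.name in keep:
            continue
        if entry.is_dir() and not entry.is_symlink():
            shutil.rmtree(entry)
        else:
            entry.unlink()

    # Copy the freshly built site into the branch checkout.
    for entry in site_dir.iterdir():
        if entry.name in keep:
            continue
        target = branch_dir / entry.name
        if entry.is_dir():
            shutil.copytree(entry, target)
        else:
            shutil.copy2(entry, target)


# Hypothetical usage, run after `bundle exec jekyll build --lsi`:
# publish_site("al-folio/_site", "path/to/master-checkout")
```

Committing and pushing are still done by hand, so the result can be inspected with `git status` first.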

(#Coding) How to create a draft post in Jekyll? Source

- Create a `_drafts` folder in the root directory of the Github Page.

- Create a new post in the `_drafts` folder. The post doesn’t need to have a date in the file name.

- Run `bundle exec jekyll serve --drafts` to serve the draft posts.

(#Coding) 75 Leetcode Questions

I start a challenge to complete 75 Leetcode questions in less than 75 days. I will log my progress in this blog post.

2023-09-28

(#Finance) The First Home Super Saver Scheme is the best way to save for a deposit by Kuan Tian

- The First Home Super Saver Scheme (FHSSS) is a scheme that allows you to save money for your first home inside your superannuation fund. The benefit of this scheme is that you can save money faster because of the tax benefit of superannuation fund.

- The maximum amount of money that you can save is 50K AUD as increased since 2020.

- The maximum amount of money that you can withdraw is 15K AUD per contribution year. It means that to withdraw the maximum amount of money, you have to contribute to your superannuation fund for 4 years.

- The benefit via this scheme can be around $11K AUD for a single person contributing for 4 years compared to saving money in a bank account.

- A couple can save up to 100K AUD via this scheme and get a benefit of around $22K AUD.

(#Finance) FIRE for Aussies by Kuan Tian

- FIRE stands for Financial Independence, Retire Early.

- Unlike in the US or UK, Aussies can take advantage of the superannuation fund to retire early, because it is a tax-advantaged fund with a tax rate of 15%, compared to the marginal tax rate of 32.5% for income between 45K AUD and 120K AUD.

- The superannuation fund can be accessed when you reach the preservation age (between 55 and 60 depending on your date of birth).

- You can contribute up to 25K AUD per year to your superannuation fund, including the employer contribution. For example, a Research Fellow with a salary of 100K AUD per year and 17% employer contribution can self contribute up to 8K AUD per year and get a tax benefit of 17.5% of 8K AUD = 1.4K AUD.

- There are tax-free components and taxable components in the super. When withdrawing money from the super, the tax-free components are mostly tax-free, while the taxable components are taxed depending on several factors such as type of withdrawal (lump sum or income stream), age, the element taxed or untaxed, etc.

- Take-away advice: Contribute as much as you can to your superannuation fund.
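The Research Fellow example above is simple arithmetic, and a small sketch makes it easy to plug in other salaries. The cap and the two tax rates are the figures quoted in these notes; the function names are my own.

```python
CONCESSIONAL_CAP = 25_000   # AUD per year, including the employer contribution
SUPER_TAX_RATE = 0.15       # tax rate inside the superannuation fund
MARGINAL_TAX_RATE = 0.325   # bracket for income between 45K and 120K AUD


def extra_contribution_room(salary, employer_rate, cap=CONCESSIONAL_CAP):
    """How much can still be self-contributed before hitting the yearly cap."""
    return max(0.0, cap - salary * employer_rate)


def tax_benefit(contribution, marginal=MARGINAL_TAX_RATE, super_rate=SUPER_TAX_RATE):
    """Tax saved by paying the super rate instead of the marginal rate."""
    return contribution * (marginal - super_rate)


room = extra_contribution_room(salary=100_000, employer_rate=0.17)
print(f"room: {room:.0f} AUD, tax benefit: {tax_benefit(room):.0f} AUD")
```

With a 100K AUD salary and a 17% employer contribution this reproduces the roughly 8K AUD of room and 1.4K AUD of benefit mentioned above.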

(#Finance) How to reduce tax via property investment by Kuan Tian again. The third video in just one morning; I am falling into a rabbit hole because his videos are really good. (Who wouldn’t, especially a procrastinating, almost-graduated PhD student?)

- The main idea is to use the tax benefit of negative gearing to reduce the tax.

- Negative gearing is a tax benefit that allows you to deduct the loss from your investment property from your taxable income. For example, if you have a salary of 100K AUD per year and a loss of 10K AUD from your investment property, then your taxable income is 90K AUD.

- The loss from your investment property can be the interest of your loan, the cost of maintenance, etc, minus the rental income.

- This strategy is good for high-income earners especially when you are in the highest tax bracket (45% for the income above 180K AUD).
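A short sketch of the negative gearing arithmetic above. The split of the 10K AUD loss into interest, maintenance, and rent is hypothetical, and the saving assumes the whole deduction sits inside a single tax bracket.

```python
def property_loss(interest, maintenance, rental_income):
    """Net yearly loss on the investment property (0 if it is profitable)."""
    return max(0.0, interest + maintenance - rental_income)


def taxable_income(salary, loss):
    """Salary after deducting the property loss."""
    return salary - loss


def tax_saved(loss, marginal_rate):
    """Tax avoided on the deducted loss, assuming it all sits in one bracket."""
    return loss * marginal_rate


# Hypothetical split that produces the 10K AUD loss from the example above.
loss = property_loss(interest=25_000, maintenance=5_000, rental_income=20_000)
print(taxable_income(100_000, loss))  # taxable income after the deduction
print(tax_saved(loss, 0.325))         # saving in the 32.5% bracket
print(tax_saved(loss, 0.45))          # saving in the 45% bracket
```

The same loss saves more tax at a higher marginal rate, which is why the strategy favors high-income earners.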

2023-09-27

(#Research) A new perspective on the motivation of VAE (by Dinh)

- Assume that \(x\) was generated from \(z\) through a generative process \(p(x \mid z)\).

- Before observing \(x\), we have a prior belief about \(z\), i.e., \(z\) can be sampled from a Gaussian distribution \(p(z) = \mathcal{N}(0, I)\).

- After observing \(x\), we want to correct our prior belief about \(z\) to a posterior belief \(p(z \mid x)\).

- However, we cannot directly compute \(p(z \mid x)\) because it is intractable. Therefore, we use a variational distribution \(q(z \mid x)\) to approximate \(p(z \mid x)\). The variational distribution \(q(z \mid x)\) is parameterized by an encoder \(e(z \mid x)\). The encoder \(e(z \mid x)\) is trained to minimize the KL divergence between \(q(z \mid x)\) and \(p(z \mid x)\). This is the motivation of VAE.

Mathematically, we want to minimize the KL divergence between \(q_{\theta} (z \mid x)\) and \(p(z \mid x)\):

\[\mathcal{D}_{KL} (q_{\theta} (z \mid x) \parallel p(z \mid x) ) = \mathbb{E}_{q_{\theta} (z \mid x)} \left[ \log \frac{q_{\theta} (z \mid x)}{p(z \mid x)} \right] = \mathbb{E}_{q_{\theta} (z \mid x)} \left[ \log q_{\theta} (z \mid x) - \log p(z \mid x) \right]\]

Applying Bayes rule, we have:

\[\mathcal{D}_{KL} (q_{\theta} (z \mid x) \parallel p(z \mid x) ) = \mathbb{E}_{q_{\theta} (z \mid x)} \left[ \log q_{\theta} (z \mid x) - \log p(x \mid z) - \log p(z) + \log p(x) \right]\]

\[\mathcal{D}_{KL} (q_{\theta} (z \mid x) \parallel p(z \mid x) ) = \mathbb{E}_{q_{\theta} (z \mid x)} \left[ \log q_{\theta} (z \mid x) - \log p(x \mid z) - \log p(z) \right] + \log p(x)\]

\[\mathcal{D}_{KL} (q_{\theta} (z \mid x) \parallel p(z \mid x) ) = - \mathbb{E}_{q_{\theta} (z \mid x)} \left[ \log p(x \mid z) \right] + \mathcal{D}_{KL} \left[ q_{\theta} (z \mid x) \parallel p(z) \right] + \log p(x)\]

So, minimizing \(\mathcal{D}_{KL} (q_{\theta} (z \mid x) \parallel p(z \mid x) )\) is equivalent to maximizing the ELBO: \(\mathbb{E}_{q_{\theta} (z \mid x)} \left[ \log p(x \mid z) \right] - \mathcal{D}_{KL} \left[ q_{\theta} (z \mid x) \parallel p(z) \right]\).
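The last identity can be checked numerically on a toy model where everything is available in closed form. This is a sketch under assumptions that are not in the notes above: a 1-D latent, prior \(p(z) = \mathcal{N}(0, 1)\), likelihood \(p(x \mid z) = \mathcal{N}(z, 1)\), and a Gaussian \(q(z \mid x) = \mathcal{N}(m, s^2)\). For this model \(p(x) = \mathcal{N}(0, 2)\) and \(p(z \mid x) = \mathcal{N}(x/2, 1/2)\).

```python
import math


def kl_gauss(m1, s1, m2, s2):
    """KL( N(m1, s1^2) || N(m2, s2^2) ) for 1-D Gaussians."""
    return math.log(s2 / s1) + (s1**2 + (m1 - m2) ** 2) / (2 * s2**2) - 0.5


def expected_log_likelihood(x, m, s):
    """E_{z ~ N(m, s^2)}[ log N(x; z, 1) ], in closed form."""
    return -0.5 * math.log(2 * math.pi) - 0.5 * ((x - m) ** 2 + s**2)


def log_evidence(x):
    """log p(x) for the toy model, where p(x) = N(0, 2)."""
    return -0.5 * math.log(2 * math.pi * 2) - x**2 / 4


def elbo(x, m, s):
    """Reconstruction term minus KL(q || prior)."""
    return expected_log_likelihood(x, m, s) - kl_gauss(m, s, 0.0, 1.0)


def kl_to_posterior(x, m, s):
    """KL(q || p(z | x)); the exact posterior here is N(x / 2, 1 / 2)."""
    return kl_gauss(m, s, x / 2, math.sqrt(0.5))


x, m, s = 1.3, 0.2, 0.8
# The two printed numbers agree up to floating-point error.
print(kl_to_posterior(x, m, s), log_evidence(x) - elbo(x, m, s))
```

Since \(\log p(x)\) does not depend on \(q\), pushing the ELBO up is the same as pushing the KL to the true posterior down, and the gap closes exactly when \(q\) equals the posterior.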

Another perspective on the motivation of VAE can be seen from the development of the Auto Encoder (AE) model.

- The AE model is trained to minimize the reconstruction error between the input \(x\) and the output \(\hat{x}\).

- The AE process is deterministic, i.e., given \(x\), the output \(\hat{x}\) is always the same.

- Therefore, the latent space of the AE model does not have the continuity and completeness properties desired in a generative model.

- To solve this problem, we change the deterministic encoder of the AE model to a stochastic encoder, i.e., instead of mapping \(x\) to a single point \(z\), the encoder maps \(x\) to a distribution \(q_{\theta} (z \mid x)\). This distribution should be close to the prior distribution \(p(z)\). This is the motivation of VAE.

2023-09-23

(#Finance) Reading My solopreneur story: zero to $45K/mo in 2 years

This is a very inspiring story about how Tony Dinh built his side project into a profitable software product and became a solopreneur. Some key takeaways:

- The unfair advantage: What is the unfair advantage? To me, it is the thing that you can do better than others in the same field and that is hard for others to catch up on. In Tony’s case, where the goal is to build a profitable software product, at first I thought that his unfair advantage was his software engineering skills. There is no doubt that Tony has a great skill set that enables him to build a product really fast. However, when reading his story again, I realized that his true unfair advantage is his ability to write and share his knowledge and experiences, and from that build up a community of followers. His very first product, DevUtils, started to fly when he discovered Twitter and started to share funny, interesting stuff on that platform. Compared to other software engineers who might have the same tech skills, Tony is much better at writing and sharing, while compared to other writers, Tony is much better at software engineering. So his unfair advantage in building a profitable software product is the combination of these two skill sets. So what is my unfair advantage in the context of being a researcher? I am still finding out.

- Start small, commit to the process, and be consistent. Tony’s first “real” business, Black Magic, is a very interesting story and a good demonstration of this lesson. The product at first was very simple: just a bar on the Twitter avatar that shows progress towards 1k followers. He built it for a very human reason: to celebrate getting his first 1k followers. It turned out that people loved this idea and were even happy to pay for a subscription. Tony started to get recurring revenue from that. However, he didn’t stop there. He kept improving the product and adding features that help users create more engagement. The product became more complex and more useful, which attracted more users. After just a few months, Black Magic went from a fun side project to a profitable business generating 4K USD per month. This story gives me a lot to think about. First, think big but start small, and do not wait to be perfect. Second, be committed to the process and consistent in it.

(#F4T) How to get rich (without getting lucky) by Naval Ravikant. Some points I like, given my current knowledge and experience:

- You will get rich by giving society what it wants but does not yet know how to get. At scale.

- Play iterated games. All the returns in life, whether in wealth, relationships, or knowledge, come from compound interest.

- Arm yourself with specific knowledge, accountability, and leverage.

- About specific knowledge:

  - Specific knowledge is knowledge that you cannot be trained for. If society can train you, it can train someone else, and replace you.

  - When specific knowledge is taught, it’s through apprenticeships, not schools.

  - Specific knowledge is often highly technical or creative. It cannot be outsourced or automated.

- Work as hard as you can. Even though who you work with and what you work on are more important than how hard you work.

(#F4T) Less is More principle

2023-09-22

(#Research) On Reading: FLOW MATCHING FOR GENERATIVE MODELING (ICLR 2023)

- Link to the paper: https://openreview.net/pdf?id=PqvMRDCJT9t

- Link to my blog post: https://tuananhbui89.github.io/blog/2023/flowmatching/

2023-09-17

(#Research) Parameter-Efficient Fine-Tuning. Link to Youtube video

(#Research) Tree of Thoughts: Deliberate Problem Solving with Large Language Models. Link to Yannic’s video

- From Chain-of-Thought, Self-Consistency CoT, to Tree of Thoughts.

- Intuition: LLMs are good at self-evaluation given a specific goal, for example, given a set of several intermediate thoughts, judging which one is the best for achieving the goal. So, we can integrate classical tree search algorithms (BFS, DFS, etc.) with LLMs to find the best path to the goal.

- The paper is also good at experimental design when choosing specific tasks to best fit to the algorithm, i.e., Ga
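The intuition above (a classical tree search where an LLM both proposes and scores intermediate thoughts) can be sketched as a BFS that keeps only the best few candidates per level. This is an illustrative sketch, not the paper's implementation: `propose` and `evaluate` are hypothetical stand-ins for the two LLM calls, replaced here by a toy bit-string problem.

```python
def tree_search_bfs(root, propose, evaluate, depth, beam_width):
    """Breadth-first search that keeps the `beam_width` best thoughts per level.

    propose(state)  -> list of candidate next states (an LLM call in practice)
    evaluate(state) -> numeric score, higher is better (also an LLM call)
    """
    frontier = [root]
    for _ in range(depth):
        candidates = [child for state in frontier for child in propose(state)]
        if not candidates:
            break
        candidates.sort(key=evaluate, reverse=True)
        frontier = candidates[:beam_width]
    return max(frontier, key=evaluate)


# Toy stand-ins for the two LLM calls: build a bit string with as many 1s as possible.
def propose(state):
    return [state + "0", state + "1"]


def evaluate(state):
    return state.count("1")


best = tree_search_bfs("", propose, evaluate, depth=3, beam_width=2)
print(best)  # 111
```

Swapping the frontier for a stack and adding backtracking would give the DFS variant.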

2023-09-14

(#Research) On Reading: Diffusion Models Beat GANs on Image Synthesis. Link to blog post

2023-09-08

(#Idea) Erasing a concept from a generative model, given a set of images.

2023-09-04

(#Research) Chasing General Intelligence by Dr. Rui Shu (OpenAI) - Guest lecture at Monash FIT 3181 - Deep Learning Unit.

Disclaimer: Rui gave a great talk and I want to take notes on it. However, because the notes are detached from the context, they do not necessarily reflect Rui’s opinions.

Part 1: Rui’s Research Journey:

- Inspired by Kingma’s VAE paper (2014) (style-content disentanglement)

- First research project: Extend VAE to semi-supervised learning setting.

- Second research project: Domain Adaptation: DIRT-T

- First thought: Take a field people care about and then make some sort of improvement. Safe approach in research.

Part 2: What is going wrong?

- Why do those things work?

- NN generalization makes zero sense:

  - Understanding Deep Learning Requires Rethinking Generalization (Zhang et al. 2017): You can train on random labels and the model still clusters the data sensibly before fitting the random labels.

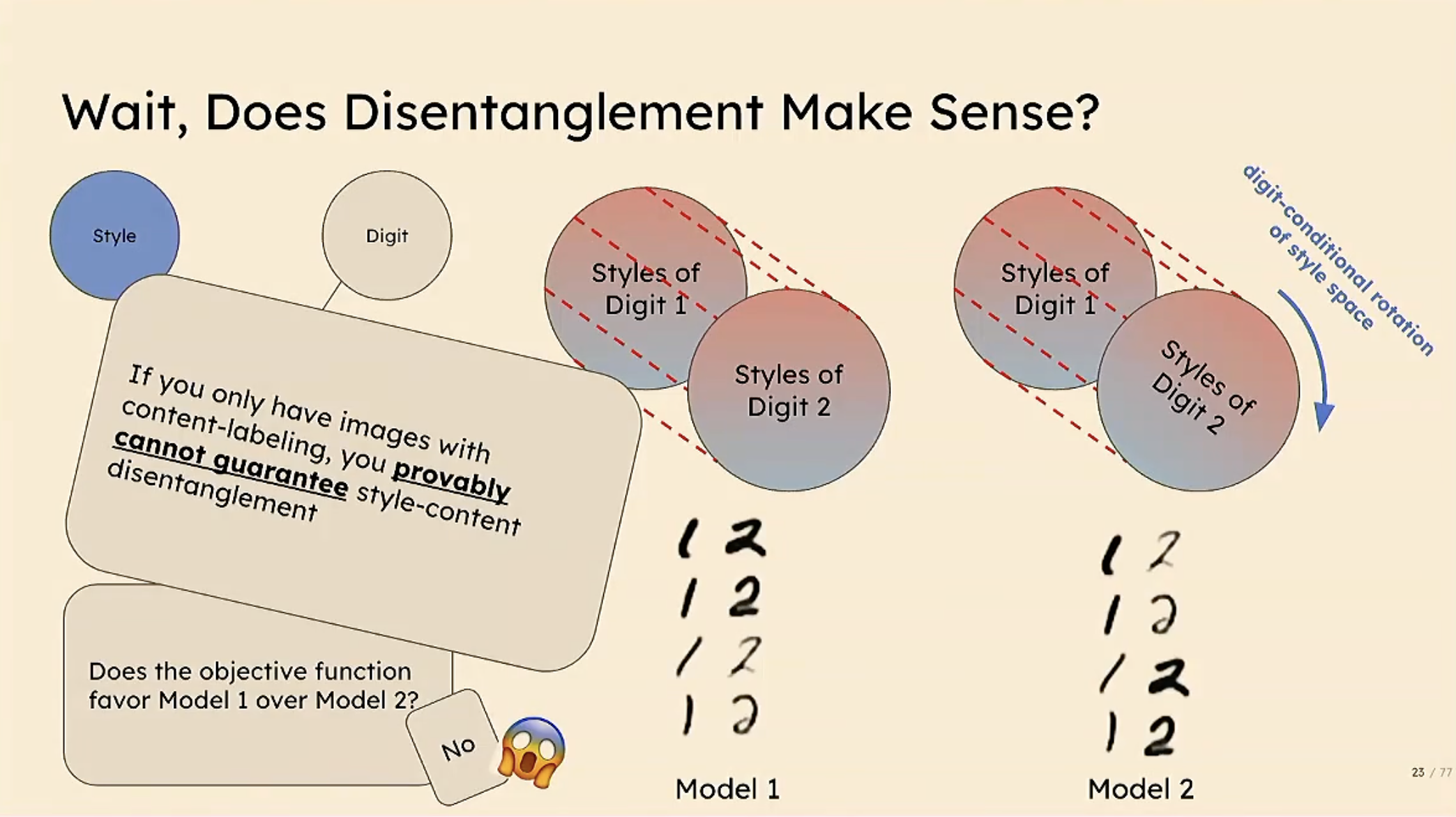

- Second thought: What are other fields in ML that work, but not in the way we think they work? Style-content disentanglement.

- Challenging Common Assumptions in the Unsupervised Learning of Disentangled Representations

Part 3:

Yang Song’s paper on estimating gradient of data distribution to learn generative model

- Generative Modeling by Estimating Gradients of the Data Distribution (Song et al. 2019)

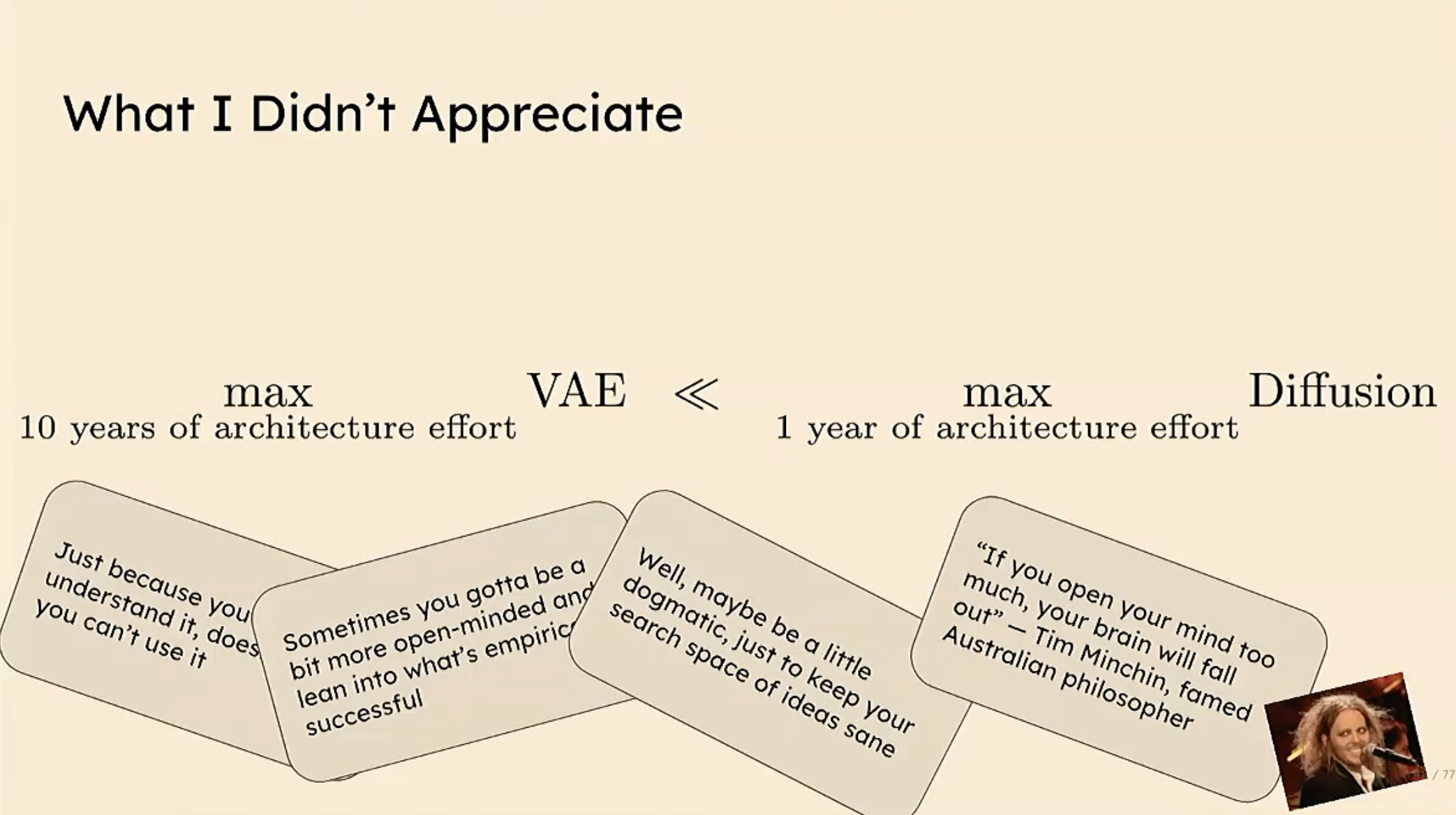

- Sensitive to the choice of model architecture, which made Rui think that it might not go far compared to VAEs or GANs. He later really regretted this thought, because this paper is the foundation of the current hype in generative models, i.e., diffusion models.

Rui’s thought after his lesson on Diffusion Model:

- Just because you don’t understand it, doesn’t mean you can’t use it.

- Sometimes you gotta be a bit more open-minded and appreciate methods which have shown empirical success.

- But also be critical and not too open-minded.

Where are we now?

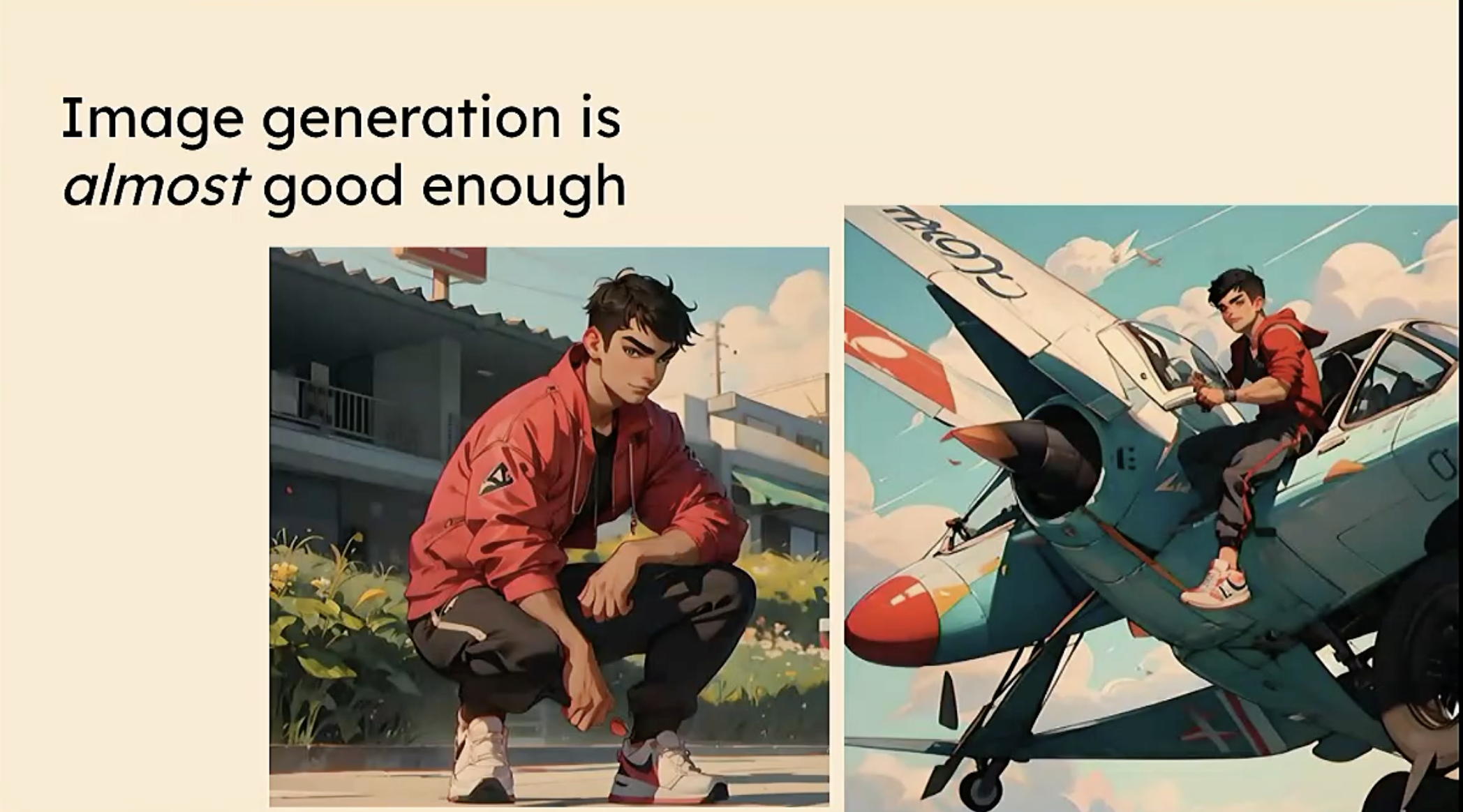

- Image gen is quite good now, what about text? Transformer paper.

- The Transformer powers GPT-4, and changes the mantra “Garbage in, garbage out” to “Garbage in, Diamond out”.

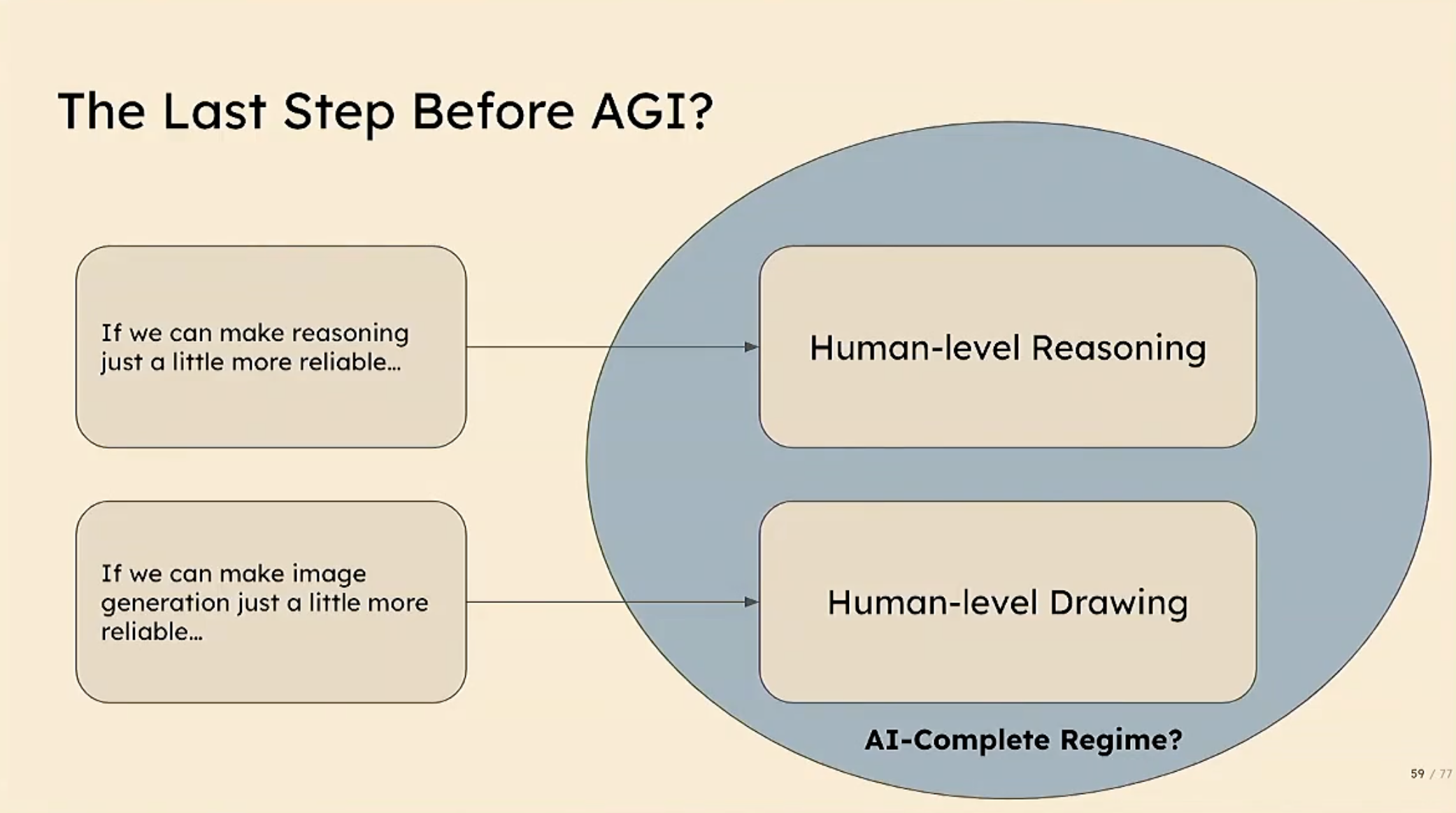

- We are really close to AGI, but it is not solved yet. GPT-4 and ChatGPT sometimes show responses that look like reasoning ability, but it is not really reasoning.

- The last step before AGI: Reasoning? But it is the hardest step.

How to get there?

- The large everything model

  - Multimodality: Image, Text, Speech, Audio, Sensor data, etc.

  - How do we design all types of data modalities into a single model?

  - This requires a lot of engineering effort.

- The future of data synthesis:

  - Using data generated by the model to improve the model itself, after filtering (or with a human in the loop). Including human knowledge in the process is the key! (Yann LeCun also mentioned that a language model will never achieve AGI, but a language model with human-in-the-loop training (RLHF) is another thing.)

The inference-heavy future?

- So an important research direction is to make model inference more efficient.

Final Advice

- Learn probability theory and applied probability. (Build a strong background/foundation)

- Learn experimental design and how to do science (e.g., Adversarial Examples Are Not Bugs, They Are Features, where the authors proposed a way to explore how a model makes predictions based on invisible features).

- Get familiar with working in a big codebase. (Learn modern skills)

- Keep things simple (inspired by Rich Sutton’s The Bitter Lesson): The human-knowledge approach tends to complicate methods in ways that make them less suited to leveraging the power of computation. The simple approach that can be scaled up to large data and large computation is better.

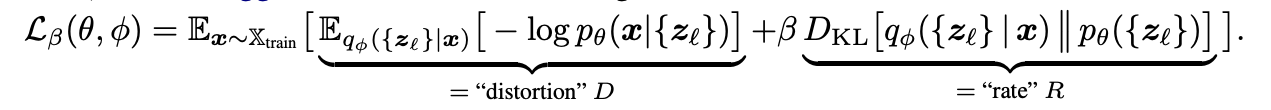

(#Research) Connection between Latent Diffusion formulation and the Rate-Distortion theory (Trung’s idea). Below are some personal notes for memorization without leaking the idea.

- The Rate-Distortion theory describes the trade-off between the rate of the latent code and the distortion of the reconstruction. The higher the rate, the more information of \(x\) is preserved in \(z\). However, a high rate lessens the generalization ability of the decoder \(\log p(x \mid z, \theta)\).

- In the latent diffusion formulation, the distortion \(D\) is measured on the first latent code \(z_1\) encoded from \(x\) by the encoder-decoder architecture.

- The rate \(R\) measures the difference between the forward process and the backward process in the diffusion model; eventually it is the matching term that trains the diffusion model.

- There is a closed-form of the mutual information \(I(Z,X)\). We can utilize this formulation to design a specific regularization term to control the rate-distortion trade-off benefiting downstream tasks.

2023-09-01

(#Research) On reading: TRADING INFORMATION BETWEEN LATENTS IN HIERARCHICAL VARIATIONAL AUTOENCODERS, published at ICLR 2023.

Revisit Rate-Distortion trade-off theory:

- Problem setting of Rate-Distortion trade-off

- How to learn a “useful” representation of data for downstream tasks?

- Using powerful encoder-decoder such as VAE, PixelCNN, etc. can easily ignore \(z\) and still obtain high marginal likelihood \(p(x \mid \theta)\). Therefore, we need to use a regularization term to encourage the encoder to learn a “useful” representation of \(z\), for example, as in Beta-VAE.

Rate distortion theory?

\[H - D \leq I(z,x) \leq R\]

where \(H\) is the entropy of the data \(x\), \(D\) is the distortion of the reconstruction of \(x\) from \(z\), and \(R\) is the rate of the latent code \(z\) (e.g., compression rate).

\(R = \mathbb{E}_{p(x)\, e(z \mid x)} \left[ \log \frac{e(z \mid x)}{m(z)} \right]\) where \(e(z \mid x)\) is the encoder and \(m(z)\) is the prior distribution of \(z\). The higher the rate, the more information of \(x\) is preserved in \(z\). However, a high rate lessens the generalization ability of the decoder \(\log p(x \mid z, \theta)\).

The mutual information is upper bounded by the rate of the latent code \(z\). For example, if \(R=0\) then \(I(z,x)=0\). This is because \(R=0\) implies \(e(z \mid x) = m(z)\), which means that the encoder does not learn anything from the data \(x\).
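The bound \(I(z,x) \leq R\) can be made concrete with a toy Gaussian encoder where both sides have closed forms. This is a sketch under assumptions of my own (not from the paper): data \(x \sim \mathcal{N}(0, 1)\), encoder \(e(z \mid x) = \mathcal{N}(a x, s^2)\), and prior \(m(z) = \mathcal{N}(0, 1)\).

```python
import math


def rate(a, s):
    """R = E_x[ KL( e(z|x) || m(z) ) ] with x ~ N(0, 1), e(z|x) = N(a*x, s^2), m(z) = N(0, 1)."""
    return -math.log(s) + (s**2 + a**2) / 2 - 0.5


def mutual_information(a, s):
    """I(z, x) for the same encoder; the aggregate posterior is N(0, a^2 + s^2)."""
    return 0.5 * math.log((a**2 + s**2) / s**2)


for a, s in [(0.0, 1.0), (1.0, 0.5), (0.0, 0.5)]:
    print(f"a={a}, s={s}: I={mutual_information(a, s):.3f}, R={rate(a, s):.3f}")
```

With `a=0, s=1` the encoder equals the prior and both quantities are zero. With `a=0, s=0.5` the rate is positive while the mutual information is still zero, so the bound is not tight in general: the gap is the KL divergence between the aggregate posterior and the prior.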

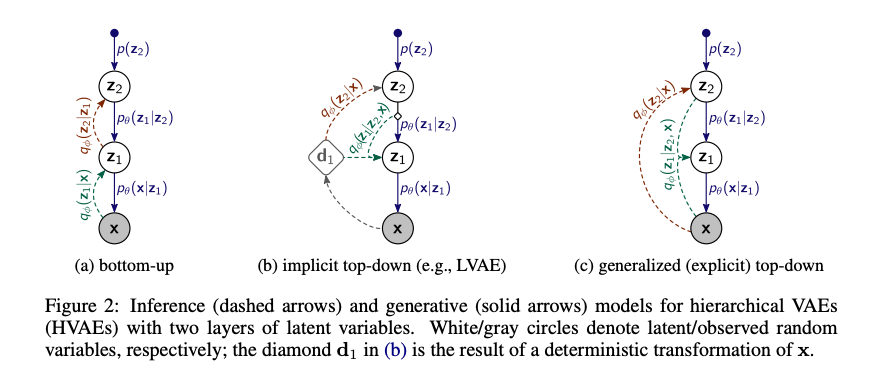

Motivation of the paper:

- Reconsider the rate distortion theory in the context of hierarchical VAEs where there are multiple levels of latent codes \(z_1, z_2, \dots, z_L\).

- The authors proposed direct links between the input \(x\) and the latent codes \(z_1, z_2, \dots, z_L\). With this architecture, they can decompose the total rate into the rates of the individual latent codes \(z_1, z_2, \dots, z_L\). This is unlike standard hierarchical VAEs, where the rate of each latent code is not directly related to the input \(x\) but to the previous latent code \(z_{l-1}\).

- Then they can control the rate of each latent code.

Standard hierarchical VAEs:

Generalized Hierarchical VAEs:

2023-08-30

(#Research) NVIDIA event: Transforming Your Development and Business with Large Language Models

- Link to the event: https://transformingthefuturelargelang.splashthat.com/

- Speaker: Dr. Ettikan Kandasamy Karuppiah and Dr. Johan Barthelemy

Introduction, Demystifying LLM and Data Curation

- Except for researchers, almost everyone (developers) now just cares about the APIs provided by Big Tech, e.g., the OpenAI ChatGPT API?

- GenAI Ecosystem: Language, Media, Biology, Tools and Platforms (1600+ companies building their GenAI on Nvidia platform)

- Ratio of various data that LLMs are trained on. Ref.

LLM Training and Inference at Scale. Customized LLM with Prompt-Learning

- Why do data curation? E.g., Duplication, Low quality, Bad unicode

- NeMo provides tools for quality filtering and reformatting.

- Currently, the data curator works on CPUs only.

- Data Blending: Learnings from Bloomberg GPT: using domain-specific data, even a small amount, helps a lot to customize a model to a specific domain or application (e.g., Bloomberg GPT for finance).

- 3D parallelism techniques: Data parallelism, Tensor and Pipeline parallelism, Sequence parallelism or Selective Activation Recomputation?

- Auto-Configurator tool: Automatically searches and optimizes model configurations under given compute or time constraints. (It is something like a recommendation based on experience precomputed on a commonly used model, e.g., GPT-3, not something that can optimize on the fly, e.g., changing the learning rate or optimizer based on the data and current performance.)

- Most important part of the tool is supporting model customization (see the slide)

- Prompt learning: Prompt tuning, p-tuning, tune companion model

- prompt engineering: few-shot learning, chain-of-thought reasoning

- Parameter efficient fine-tuning: LoRA, IA3, Adapters

- Fine-tuning: SFT, RLHF

Questions:

- What is the Nvidia platform that a lot of companies build on?

- If every developer uses the same API, what differentiates them, given that they all get the same suggestions from generative AI?

- Free GPU? Does Nvidia provide any free GPU or support for research?

Nvidia Framework:

- NeMo https://developer.nvidia.com/nemo

- BioNeMo

- Picasso

2023-08-26

(#F4T) Review 10 best ideas/concepts from Charlie Munger. Link to the blog post: https://tuananhbui89.github.io/blog/2023/f4t/

2023-08-25

(#Research) Data-Free Knowledge Distillation

- Reference: Data-Free Model Extraction

- What is Data-Free KD? It is a method to transfer knowledge from a teacher model to a student model without using any of the original training data. The idea is to learn a generator that generates synthetic data similar to the data the teacher model was trained on. Then, we can use the synthetic data to train the student model. \(L_S = L_{KL} (T(\hat{x}), S(\hat{x}))\)

Where \(T(\hat{x})\) is the output of the teacher model and \(S(\hat{x})\) is the output of the student model. \(\hat{x}\) is the synthetic data generated by the generator \(G\).

\[L_G = L_{CE} (T(\hat{x}), y) - L_{KL} (T(\hat{x}), S(\hat{x}))\]

Where \(y\) is the label of the synthetic data. Minimizing the first term encourages the generator to generate data that fall into the target class \(y\), while maximizing the second term encourages the generator to generate diverse data? Compared to GAN, we can think of both the teacher and student models as acting as discriminators.

This adversarial game needs to be integrated into the training process in each iteration. For example, in each iteration, you need to minimize \(L_G\) to generate new synthetic data, and then use \(\hat{x}\) to train the student. This is to ensure that the synthetic data is new to the student model. Therefore, one of the drawbacks of DFKD is that it is very slow.
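The two losses above can be written out on plain logit vectors to see how they pull in opposite directions. This is a minimal sketch, not the reference implementation: it uses pure Python instead of a deep learning framework, and it assumes the KL direction is \(KL(T \parallel S)\), which the notes do not specify.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) between two discrete distributions."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))


def cross_entropy(p, label, eps=1e-12):
    """Cross-entropy of distribution p against a hard label."""
    return -math.log(p[label] + eps)


def student_loss(teacher_logits, student_logits):
    """L_S = L_KL(T(x_hat), S(x_hat)): the student imitates the teacher."""
    return kl_divergence(softmax(teacher_logits), softmax(student_logits))


def generator_loss(teacher_logits, student_logits, label):
    """L_G = L_CE(T(x_hat), y) - L_KL(T(x_hat), S(x_hat))."""
    t, s = softmax(teacher_logits), softmax(student_logits)
    return cross_entropy(t, label) - kl_divergence(t, s)


teacher = [4.0, 0.5, -1.0]   # hypothetical teacher logits for one synthetic sample
student = [1.0, 1.2, 0.3]    # hypothetical student logits for the same sample
print(student_loss(teacher, student), generator_loss(teacher, student, label=0))
```

The generator is rewarded when the teacher is confident about class `y` and the student still disagrees with the teacher, so each generator step looks for samples the student has not learned yet.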

2023-08-21

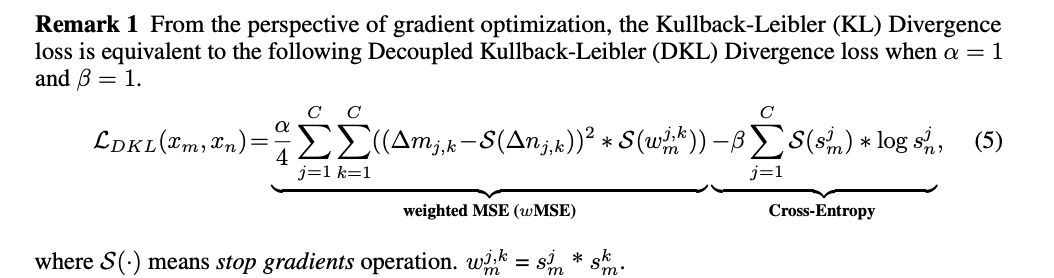

(#Research) On reading: Decoupled Kullback-Leibler Divergence Loss.

- Paper link: https://arxiv.org/abs/2305.13948

- Main idea: Minimize the difference between two logit distributions (weighted MSE loss)

2023-08-20

(#Research) On reading: Classifier-Free Diffusion Guidance.

- Paper link: https://arxiv.org/abs/2207.12598

- Motivation: Training a diffusion model to generate images from a specific class. Dhariwal & Nichol (2021) proposed the classifier guidance method, which uses an auxiliary classifier.

- Previous work (i.e., Classifier Guided Diffusion) trains a classifier \(f_\phi (y \mid x_t,t)\) on noisy images \(x_t\) and uses the gradient \(\nabla_{x_t} \log f_{\phi}(y \mid x_t, t)\) to guide the sampling process towards the target class \(y\). However, this method requires an extra classifier trained on noisy images alongside the diffusion model.

(#Idea) Mixup Class-Guidance Diffusion model.

- Main idea: Train a diffusion model that can generate not only images from a specific class but also images from a mixup of two classes. It can be applied to Continual Learning setting as in DDGR or Domain Generalization setting.

- Nice way to generate mixup labels with just y and lambda. Normally, we need to have two classes \(y_i,y_j\) and a parameter \(\lambda\) to generate a mixup label \(y_{mixup} = \lambda y_i + (1-\lambda) y_j\). However, we can have another way to generate mixup label, with one label \(y\), and one parameter \(\gamma\) to control the mixup ratio. For example, given a set of all labels \(Y=\{ y_i \}_{i=1}^N\), we can arrange them in a circle, and then we can generate a mixup label \(y_{mixup}\) by moving \(\gamma\) steps clockwise from \(y\). What is the benefit of this method?

- Recall the equation in Classifier-Free Guidance paper \(\nabla_{x_t} \log p(y|x_t)=\nabla_{x_t} \log p(x_t|y) - \nabla_{x_t} \log p(x_t)\), where \(\nabla_{x_t} \log p(y \mid x_t)\) is the gradient of the implicit classifier.

- In case of mixup label, we have \(\nabla_{x_t} \log p(y_{mixup}|x_t)=\nabla_{x_t} \log p(x_t|y_{mixup}) - \nabla_{x_t} \log p(x_t)\), where \(y_{mixup} = \gamma y_i + (1-\gamma) y_j\), which requires two gradients \(\nabla_{x_t} \log p(y_{i} \mid x_t)\) and \(\nabla_{x_t} \log p(y_{j} \mid x_t)\).
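The identity recalled above is Bayes' rule followed by a gradient with respect to \(x_t\). A short derivation, using only the symbols already introduced:

\[\log p(y \mid x_t) = \log p(x_t \mid y) + \log p(y) - \log p(x_t)\]

\[\nabla_{x_t} \log p(y \mid x_t) = \nabla_{x_t} \log p(x_t \mid y) - \nabla_{x_t} \log p(x_t)\]

The \(\log p(y)\) term drops out because it does not depend on \(x_t\). The same steps apply with \(y_{mixup}\) in place of \(y\).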

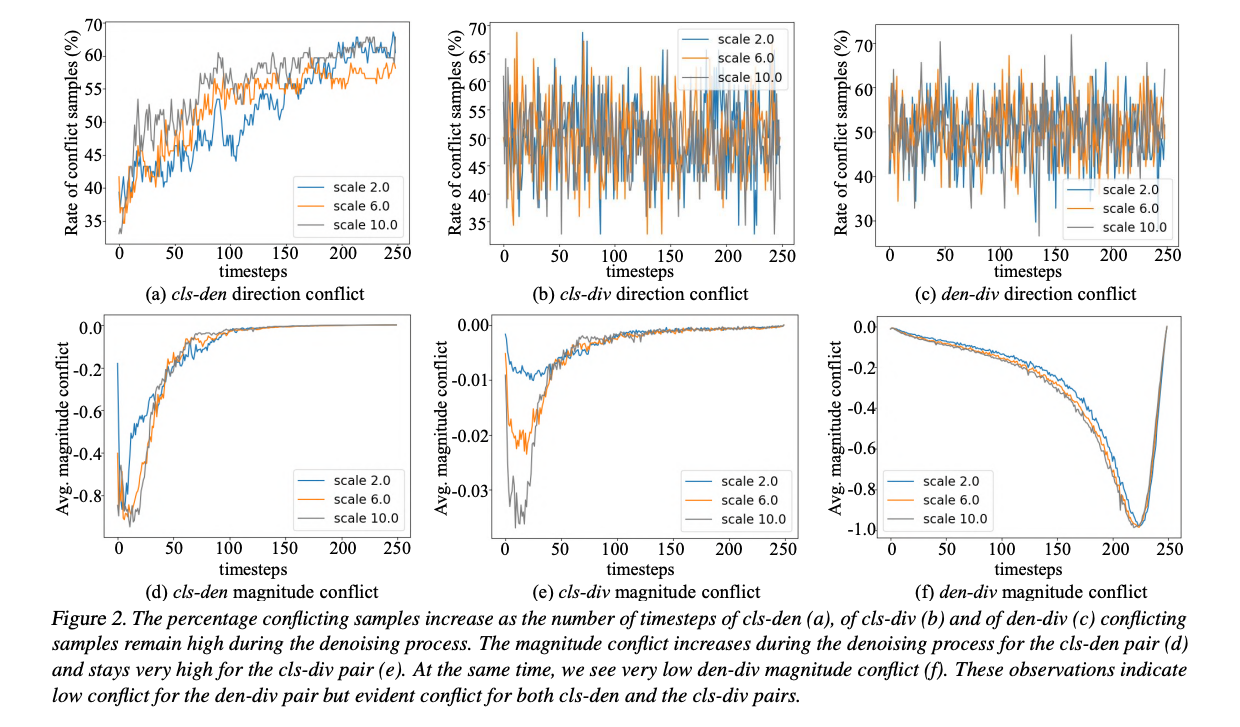

- Some observations/ideas from other work can be applied:

- PixelAsParam: A Gradient View on Diffusion Sampling with Guidance: In this paper, the authors observed that the gradient of the auxiliary classifier \(\nabla_{x_t} \log p(y \mid x_t)\) and the gradient of the denoising process \(\nabla_{x_t} \log p(x_t)\) are conflicting (refer to Figure 2). It can be interpreted as the gradient of the auxiliary classifier is trying to move the sample towards a target class, while the gradient of the denoising process is trying to make the sample more diverse, i.e., their goals are contradictory. In this paper, they applied a multi-objective optimization method to project the gradient of auxiliary classifier onto a direction that is less conflicting. The result is that the generated images are in target class but still diverse. (better in both FID - distinguish between synthetic and real images - and Inception Score - distinguish between different classes of synthetic images). The time when the conflicting happens is also interesting. It is more conflicting at the beginning of the sampling process, and then it becomes less conflicting.

- Mixing between background and foreground using FFT/IFFT transformation.

2023-08-19

(#Coding) How to show an image in Github page. Reference to this post: https://tuananhbui89.github.io/blog/2023/learn-code/

2023-08-18

(#Research) On Reading: DDGR: Continual Learning with Deep Diffusion-based Generative Replay

- Paper link: https://openreview.net/pdf?id=RlqgQXZx6r

- Problem setting: Continual learning, more specifically, task-incremental learning. For example, the CIFAR100 dataset where each new task consists of 5 classes, learned sequentially.

- Questions:

  - Do we know the number of tasks in advance?

  - Do we need to change the model architecture for each task?

  - Can we access the data from previous tasks? Normally, we cannot. But there is a variant of CL called CL with repetition (or replay) where we can access the data from previous tasks but in different forms.

- Main Idea: Using Class-Guidance Diffusion model to generate data from previous tasks to be used to train classifier and also reinforce the generative model.

- The main point is that the diffusion model has some advantages over GAN or VAE in this specific CL setting:

  - It does not have the mode collapse problem, unlike GAN.

  - It can generate (overfit) data from previous tasks quite well.

  - It has a class-guidance mechanism that can guide the diffusion model to learn the new distribution from a new task without overlapping with previous tasks (so the generator doesn’t face a serious catastrophic forgetting problem).

(#Idea) We can use Class-Guidance Diffusion model to learn mixup data and then can use that model to generate not only data from pure classes but also from mixup classes. It is well accepted that mixup technique can improve the generalization of classifier, so it can be applied to CL setting as well.

2023-08-17

(#Code) How to disable NSFW detection in Huggingface.

- context: I am trying to generate inappropriate images using Stable Diffusion with prompts from the I2P benchmark. However, the NSFW detection in Huggingface is too sensitive: it filters out all of the images and returns a black image instead. Therefore, I need to disable it.

- solution: modify the `pipeline_stable_diffusion.py` file in the Huggingface library. Just return `image` and `None` in the `run_safety_checker` function.

```python
# line 426 in the pipeline_stable_diffusion.py
def run_safety_checker(self, image, device, dtype):
    return image, None

    # The following original code will be ignored
    if self.safety_checker is None:
        has_nsfw_concept = None
    else:
        if torch.is_tensor(image):
            feature_extractor_input = self.image_processor.postprocess(image, output_type="pil")
        else:
            feature_extractor_input = self.image_processor.numpy_to_pil(image)
        safety_checker_input = self.feature_extractor(feature_extractor_input, return_tensors="pt").to(device)
        image, has_nsfw_concept = self.safety_checker(
            images=image, clip_input=safety_checker_input.pixel_values.to(dtype)
        )
    return image, has_nsfw_concept
```

(#Idea, #GenAI, #TML) Completely erase a concept (i.e., NSFW) from latent space of Stable Diffusion.

- Problem: Current methods such as ESD (Erasing Concepts from Diffusion Models) can erase quite well a concept from the Stable Diffusion. However, recent work (Circumventing Concept Erasure Methods for Text-to-Image Generative Models) has shown that it is possible to recover the erased concept by using a simple Textual Inversion method.

- Firstly, personally, I think that the approach in Pham et al. (2023) is not very convincing, because they need to use additional data (25 samples/concept) to learn a new token associated with the removed concept. So, it is not surprising that they can generate images with the removed concept. It is because of the power of the personalization method, not because of a weakness of the ESD method. It would be better to compare the performance on recovering a concept A (a concept totally new to the base Stable Diffusion model, such as your personal images) on two models: an SD model injected with concept A, and a model fine-tuned with concept A, then erased of concept A, and then injected with concept A again. If the latter model cannot generate images with concept A better than the model with concept A injected directly into the base model, then we can say that the ESD method is effective.

2023-08-16

(#Research) The Inappropriate Image Prompts (I2P) benchmark.

- Including 4703 unique prompts to generate inappropriate images with Stable Diffusion. The prompts cover combinations of 7 categories: hate, harassment, violence, self-harm, sexual, shocking, and illegal activities.

- Research paper: Safe Latent Diffusion: Mitigating Inappropriate Degeneration in Diffusion Models, CVPR 2023.

- Huggingface page: https://huggingface.co/datasets/AIML-TUDA/i2p

2023-08-14

(#Research) Some trends in KDD 2023: Graph Neural Networks and Causal Inference from Industrial Applications.

(#Research) Graph Neural Networks, definition of neighborhood aggregation. Most GNN methods work on millions of nodes; scaling to billions of nodes takes a lot of tricks under the hood (from Dinh’s working experience in Trustingsocial).
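To make neighborhood aggregation concrete, here is a minimal pure-Python sketch of one round of mean aggregation. It is a simplification chosen for illustration: each node averages its own feature vector with those of its neighbors. The function name aggregate_mean and the toy graph are hypothetical.

```python
def aggregate_mean(features, adjacency):
    """One round of mean neighborhood aggregation.

    features:  dict node -> list of floats (the node's current embedding)
    adjacency: dict node -> list of neighbor nodes
    Returns a new dict where each node's embedding is the mean of its own
    embedding and its neighbors' embeddings.
    """
    updated = {}
    for node, own in features.items():
        group = [own] + [features[n] for n in adjacency.get(node, [])]
        dim = len(own)
        updated[node] = [sum(vec[i] for vec in group) / len(group) for i in range(dim)]
    return updated


# Toy path graph a - b - c with 1-dimensional features.
features = {"a": [1.0], "b": [2.0], "c": [6.0]}
adjacency = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
one_hop = aggregate_mean(features, adjacency)
```

Real GNN layers follow the aggregation with a learned linear map and a nonlinearity, both omitted here. Stacking k such rounds lets each node see its k-hop neighborhood.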

(#Research) (With Trung and Van Anh) We derived a nice framework that connects data-space distributional robustness (as in our ICLR 2022 paper) and model-space distributional robustness (as in SAM).
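For reference, the two standard objectives being connected can be written as follows. This is only the textbook form of each problem. The notation (the divergence D, the radii epsilon and rho, the perturbation delta) is chosen here for illustration, and the connecting framework itself is not reproduced in this note.

```latex
% Data-space distributional robustness: worst case over distributions Q
% within distance \epsilon of the data distribution P.
\min_{\theta} \; \sup_{Q \,:\, \mathcal{D}(Q, P) \le \epsilon} \;
  \mathbb{E}_{(x, y) \sim Q} \big[ \ell(f_{\theta}(x), y) \big]

% Sharpness-aware minimization (SAM): worst case over parameter
% perturbations \delta within an \ell_2 ball of radius \rho.
\min_{\theta} \; \max_{\lVert \delta \rVert_2 \le \rho} \; L(\theta + \delta)
```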

2023-08-08

(#Research) On reading: Erasing Concepts from Diffusion Models (ICCV 2023). https://erasing.baulab.info/

(#Research) On reading: Circumventing Concept Erasure Methods for Text-to-Image Generative Models. Project page: https://nyu-dice-lab.github.io/CCE/

2023-08-06

(#Coding) Strange bug in generating adversarial examples using Huggingface.

- Context: I am trying to implement an idea similar to the Anti-Dreambooth project to generate adversarial perturbations for the Textual Inversion project. However, I hit this bug, which cost me 2 days to figure out.

- Bug: the gradient on the input tensor is None, even though requires_grad is set to True.

- Cause: the Huggingface accelerator (Ref: gradient_accumulation). The accelerator supports gradient accumulation, i.e., accumulating the gradient over multiple batches before each optimizer step. It requires the model and the train dataloader to be prepared with the function accelerator.prepare(). However, in our case, we do not use the train dataloader (see the blog post about Anti-Dreambooth). Therefore, when we still use accelerator.accumulate() in the training loop, the gradient is accumulated over multiple batches, and the gradient on the input tensor is None.

- Fix: remove accelerator.accumulate() from the training loop. No, it doesn’t work!
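Since the fix above did not work, here is a minimal PyTorch sketch, independent of the Anti-Dreambooth and Accelerate code, of one well-known way an input tensor ends up with a None gradient: the tensor is not a leaf of the autograd graph. It illustrates the symptom only and is not a diagnosis of the bug above. All tensor names are hypothetical.

```python
import warnings

import torch

# Case 1: a leaf tensor created with requires_grad=True receives a gradient.
x_leaf = torch.rand(1, 3, 8, 8, requires_grad=True)
(x_leaf * 2.0).sum().backward()
leaf_has_grad = x_leaf.grad is not None

# Case 2: the result of an operation on such a tensor is a non-leaf tensor.
# It still has requires_grad=True, but backward() does not populate its .grad.
x_nonleaf = torch.rand(1, 3, 8, 8, requires_grad=True) + 0.0
(x_nonleaf * 2.0).sum().backward()
with warnings.catch_warnings():
    warnings.simplefilter("ignore")  # PyTorch warns when reading .grad of a non-leaf
    nonleaf_grad = x_nonleaf.grad

# Two ways to get the gradient anyway:
# (a) ask autograd for it explicitly, which also works for non-leaf tensors;
x_a = torch.rand(1, 3, 8, 8, requires_grad=True) + 0.0
(grad_a,) = torch.autograd.grad((x_a * 2.0).sum(), x_a)

# (b) detach and clone first, so the tensor becomes a fresh leaf.
x_b = x_nonleaf.detach().clone().requires_grad_(True)
(x_b * 2.0).sum().backward()
```

When debugging, checking is_leaf and requires_grad on the perturbed input right before the backward pass is a cheap first test. Calling retain_grad() on a non-leaf tensor before backward is another option.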

2023-08-05

(#Coding) Understand the implementation of the Anti-Dreambooth project. See the blog post.

2023-08-04

(#Research) Three views of Diffusion Models:

- Probabilistic viewpoint as in DDPM

- Denoising Score matching

- Stochastic Differential Equations (SDE) viewpoint (which is the most general one)
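As a compact reminder, the three views can be summarized by their standard formulations. The notation below follows common usage in the literature rather than anything fixed in this note, and the Gaussian perturbation kernel in the second view is an assumption.

```latex
% 1. Probabilistic (DDPM) view: a fixed Gaussian forward chain, with the
%    reverse chain trained through the simplified noise-prediction loss.
q(x_t \mid x_{t-1}) = \mathcal{N}\big(x_t;\ \sqrt{1 - \beta_t}\, x_{t-1},\ \beta_t I\big)
L_{\text{simple}} = \mathbb{E}_{t, x_0, \epsilon}
  \big\lVert \epsilon - \epsilon_\theta\big(\sqrt{\bar\alpha_t}\, x_0
  + \sqrt{1 - \bar\alpha_t}\, \epsilon,\ t\big) \big\rVert^2,
  \qquad \bar\alpha_t = \prod_{s=1}^{t} (1 - \beta_s)

% 2. Denoising score matching view: fit a score network s_\theta to the
%    score of a Gaussian perturbation kernel q_\sigma(\tilde{x} \mid x)
%    = \mathcal{N}(\tilde{x};\ x,\ \sigma^2 I).
\mathbb{E}_{x,\ \tilde{x} \sim q_\sigma(\tilde{x} \mid x)}
  \big\lVert s_\theta(\tilde{x})
  - \nabla_{\tilde{x}} \log q_\sigma(\tilde{x} \mid x) \big\rVert^2,
  \qquad \nabla_{\tilde{x}} \log q_\sigma(\tilde{x} \mid x)
  = -\frac{\tilde{x} - x}{\sigma^2}

% 3. SDE view: a forward diffusion and its reverse-time counterpart,
%    which depends on the score of the marginal p_t.
dx = f(x, t)\, dt + g(t)\, dw
dx = \big[ f(x, t) - g(t)^2\, \nabla_x \log p_t(x) \big]\, dt + g(t)\, d\bar{w}
```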

2023-08-03

(#Research) Trusted Autonomous Systems

- Trusted Autonomous Systems (TAS) is Australia’s first Defence Cooperative Research Centre.

- There are many trustworthiness-related projects under way at this center.

- Reference: https://tasdcrc.com.au/

2023-08-01

(#Research) Helmholtz Visiting Researcher Grant

- https://www.helmholtz-hida.de/en/new-horizons/hida-visiting-program/

- 1-3 months visiting grant for Ph.D. students and postdocs in one of 18 Helmholtz centers in Germany.

- Deadline: 16 August 2023 and will end on 15 October 2023.

- CISPA - Helmholtz Center for Information Security https://cispa.de/en/people

2023-07-31

(#Research) Australian Research Council (ARC) Discovery Project (DP) 2023.

- The ARC DP is a very competitive grant in Australia.

- List of successfully funded projects in 2023: https://rms.arc.gov.au/RMS/Report/Download/Report/1b0c8b2e-7bb0-4f2d-8f52-ad207cfbb41d/243

- This is a good source to find potential postdoc positions or Ph.D. scholarships as well as to find potential collaborators.

- For example, regarding Trustworthy Machine Learning, there are two projects including Dinh’s project.

2023-07-30

(#Finance) First Home Buyer Super Saver Scheme.

- Save money for a first home inside a superannuation fund. Contributions are taxed at 15% instead of the marginal tax rate. On withdrawal, another 15% tax rate applies. Each individual can save up to 50k across all years.

2023-07-27

(#GenAI) How to run textual inversion locally using the Huggingface library, without logging in to Huggingface with a token. Including:

- How to install git-lfs on Linux to download large files from Github. Reference: https://github.com/git-lfs/git-lfs/blob/main/INSTALLING.md

- How to download a pretrained model from the Huggingface model hub. Reference: https://huggingface.co/docs/hub/models-downloading

- Set up the environment and dependencies to run textual inversion locally.

- Setup script
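A minimal sketch of the download-once, then run-offline workflow from Python. It assumes the huggingface_hub and diffusers packages are installed and that the model repository is public, so no token is needed. The helper names, the repository id, and the directory are placeholders, not the setup actually used here.

```python
import os


def download_model(repo_id, local_dir):
    """Fetch every file of a Hub model repository into local_dir (needs network once)."""
    from huggingface_hub import snapshot_download  # lazy import: optional dependency

    return snapshot_download(repo_id=repo_id, local_dir=local_dir)


def enable_offline_mode(env=os.environ):
    """Ask the Hugging Face libraries not to contact the Hub.

    Set this before the libraries are imported, because the variable is
    read at import time.
    """
    env["HF_HUB_OFFLINE"] = "1"
    return env


def load_local_pipeline(local_dir):
    """Load a Stable Diffusion pipeline strictly from files already on disk."""
    from diffusers import StableDiffusionPipeline  # lazy import: optional dependency

    return StableDiffusionPipeline.from_pretrained(local_dir, local_files_only=True)


# Typical order (placeholders, not run here):
#   download_model("<org>/<model>", "./models/sd")   # once, with network
#   enable_offline_mode()                            # in later, offline sessions
#   pipe = load_local_pipeline("./models/sd")
```

The same local directory can then be passed to the textual inversion training script in place of a Hub model name, through its --pretrained_model_name_or_path argument.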

2023-07-24

(#Productivity) How to present slides and take notes on the same screen simultaneously (e.g., it is very useful when teaching or giving a talk). At Monash, the lecture theatres have MirrorOp installed on all screens, which can connect wirelessly to a laptop, but it is not convenient when we want to take notes.

- Best solution: connect an iPad to the screen and use the iPad to present the slides. We can also see the slide notes on the iPad (requires a USB-C to HDMI adapter and an HDMI cable).

- Alternative solution: join a Zoom meeting on a personal computer (for presentation) and on an iPad (for taking notes), and share the screen from the iPad on Zoom if needed. The PC can connect to the screen using an HDMI cable or MirrorOp.

2023-07-23

Micromouse competition.

- First introduced by Claude Shannon in the 1950s.

- At the beginning, it was just a simple maze-solving competition. However, after 50 years of growth, it has become very competitive, with many different categories: speed, efficiency, and size. Along the way, many great ideas have been introduced and applied to the competition. It involves many different fields (mechanical, electrical, software, and AI), all in just one small robot.

- The Fosbury Flop in high jump. When everyone uses the same jump technique, performance saturates. Then Fosbury introduced a new technique (the backward flop) that no one had ever thought of before, and it became the new standard (even named after him). The same phenomenon happens in the Micromouse competition.

- The two most important game-changing ideas in the history of the Micromouse competition: the ability to move diagonally, and using a fan (vacuum) to suck the mouse onto the path so that it can move faster, as in a racing car.

Reference:

- The Fastest Maze-Solving Competition On Earth by Veritasium.

- The Fosbury Flop—A Game-Changing Technique

2023-07-22

(#Parenting) ATAR and university admission.

(#AML) Rethinking Backdoor Attacks, Madry’s group. https://arxiv.org/pdf/2307.10163.pdf

(#Productivity) How to synchronize a PowerPoint file in Teams among multiple editors working simultaneously (i.e., share the file in Teams, and open it locally using PowerPoint). This way the math equations are retained.

If you have some math equations in your PowerPoint and open it in Google Slides, the equations will be converted to images. If you accidentally sync that file with your original file, all math equations will be lost.

2023-07-21

(#F4T) You cannot solve a problem with the same thinking that created it. Albert Einstein.

Context: Our lab had a workshop last week and Dinh gave a talk about his favorite book “The 7 habits of highly effective people”. One of the habits is “Sharpen the saw”, which means that you always need to improve yourself in all aspects: physical, mental, spiritual, and social. That is how you can overcome your limits and the obstacles that you are facing.

- You cannot solve a research problem in your thesis with the same knowledge that you have when starting your thesis. You need to grow and learn.

- If you want a bigger paycheck, you need to learn new skills and new knowledge before applying for a new job.

(#Experience) The first department meeting as a new Research Fellow.

Context: I have just started my new position as an RF at the Department of Data Science and AI, Monash University. Today is the first time I have been exposed to what really happens beyond a student’s perspective.

- Teaching matter (because new semester is about to start)

- Head of department’s presentation about current activities (especially hiring and open positions, and budget)

- Ph.D. student recruitment, how competitive it is and how to rank the candidates (some insights: academic record and research record)